| Artificial Intelligence Data Overview | |
|---|---|
| Concept Origin | Karel Čapek (1921) |
| First Physical Robot | Gakutensoku (1929) |
| Foundational Evaluation | The Turing Test (1950) |
| Initial Term Coiner | John McCarthy (1956) |
| First Functional Chatbot | ELIZA (1966) |
| Algorithmic Foundation | Backpropagation (1969) |
| Primary Architecture | The Transformer (2017) |
| Global Market Value | $539.45 Billion (2026) |
| Projected Market Value | $3.49 Trillion (2033) |
| Projected Job Automation | 300 Million Roles (2030) |
| Top Hardware Provider | NVIDIA |
| Primary Programming Language | Python |
| Leading Enterprise Model | Claude 4.5 Sonnet |
| Open-Weight Disruptor | Llama 4 Scout |
| Ultimate Objective | Artificial General Intelligence (AGI) |
| European Legislation | The EU AI Act |
| Official Regulatory Body | European AI Office |
| Hardware Specifications | NVIDIA Corporate |

Artificial Intelligence refers to the advanced computational paradigm where non-biological machines are specifically engineered to mimic, replicate, and surpass human cognitive functions. Composed of complex mathematical architectures, vast neural networks, and massive silicon hardware clusters, these systems process immense volumes of unstructured data to generate predictive intelligence, autonomous actions, and original creative output without explicit human programming. Today, this technology is utilized globally to automate complex tasks across software engineering, medical diagnostics, autonomous robotics, and financial forecasting.

The cultural conceptualization of this paradigm predates the invention of the modern microprocessor. It was notably marked in 1921 when Czech playwright Karel Čapek introduced the word robot to the global lexicon. However, the formal academic transition from mechanical automatons to computational logic occurred in the mid-20th century. Theoretical pioneers like Alan Turing and John McCarthy established the foundational philosophy of the field in the 1950s. After decades of fluctuating academic funding and severe hardware limitations, the discipline experienced a profound renaissance catalyzed by the introduction of deep learning, vast datasets, and highly parallelized Graphics Processing Units (GPUs).

This comprehensive report explores the immense modern scope of the worldwide artificial intelligence industry. We will analyze the historical evolution of neural networks, the precise mathematical mechanisms powering machine learning, the fierce corporate battles over silicon hardware dominance, and the profound societal transition toward Artificial General Intelligence.

1. Early History and Robotics

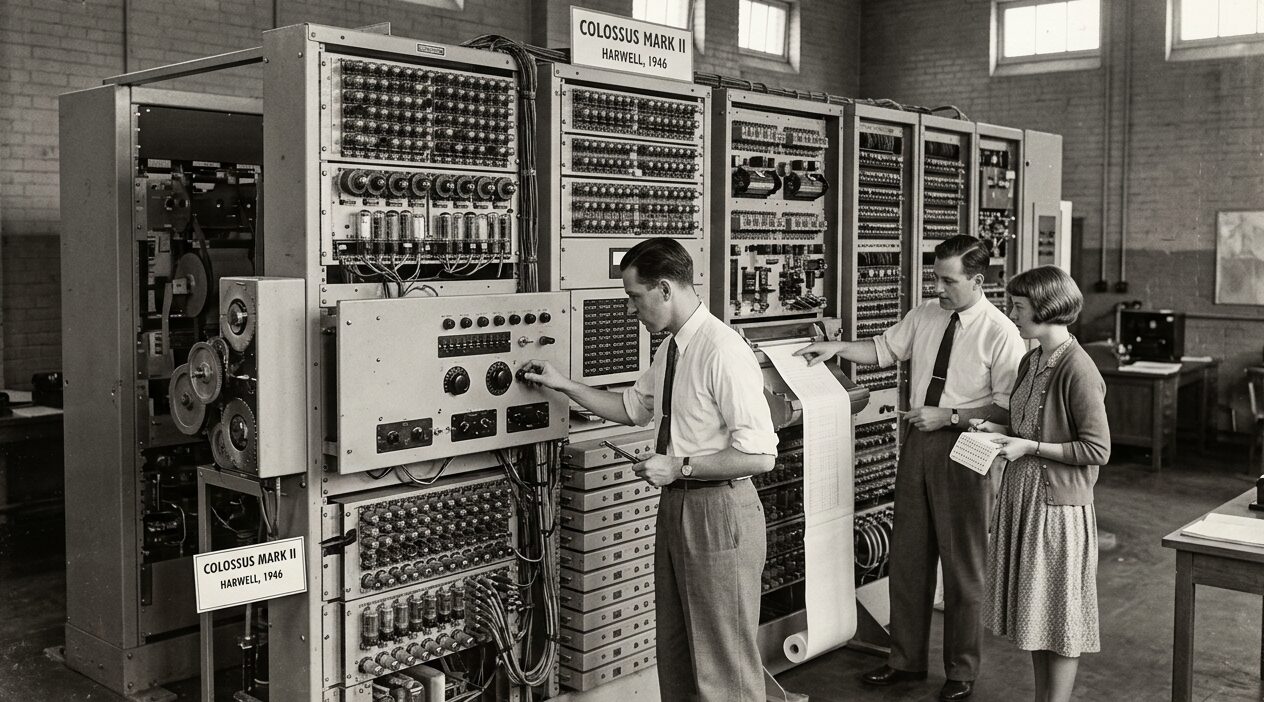

The cultural inception of this transformative paradigm was marked in 1921 when Czech playwright Karel Čapek released the science fiction play Rossum’s Universal Robots. This literary work formally introduced the word robot to the global lexicon to describe artificial people. Practical mechanical experimentation followed shortly thereafter, culminating in 1929 with the construction of Gakutensoku, the first Japanese robot, designed by professor Makoto Nishimura. The formal academic transition from rigid mechanical automatons to true computational intelligence occurred in the mid-20th century. In 1949, prominent computer scientist Edmund Callis Berkley published Giant Brains, or Machines that Think, which controversially compared early computing models directly to human cognitive functions.

2. The Turing Test

The defining theoretical pivot occurred in 1950 when British mathematician Alan Turing published his seminal academic paper Computing Machinery and Intelligence. In this foundational document, Turing posed the fundamental question regarding whether machines could think. He proposed a pragmatic method for evaluating machine intelligence, a method known globally as the Imitation Game or the Turing Test. Turing’s operational approach deliberately bypassed philosophical debates regarding true machine consciousness. Instead, he focused on whether a machine could exhibit intelligent behavior that was functionally indistinguishable from that of a human operator across a text-based interface.

3. The Dartmouth Conference

The scientific discipline was formally established in 1956 at the legendary Dartmouth Summer Research Project. This influential project was organized by John McCarthy, who officially coined the specific term artificial intelligence. This historic conference gathered the foundational figures who would shape the trajectory of the entire field for decades. Marvin Minsky advanced early research into human cognition, while Allen Newell and Herbert Simon developed some of the very first problem-solving logic programs. Furthermore, Arthur Samuel pioneered the foundational concepts of machine learning by creating a checkers program capable of independent learning and steady self-improvement through operational experience.

4. Early Milestones and the AI Winter

This era of early academic optimism yielded significant structural milestones. In 1966, researchers at the Massachusetts Institute of Technology created ELIZA, the first functional chatbot capable of simulating a Rogerian therapist using basic linguistic pattern-matching rules. During that same year, the Stanford Research Institute developed Shakey the Robot. Shakey was the first mobile machine capable of actively sensing its physical surroundings and logically reasoning about its physical environment, proving that systems could physically interact with the real world rather than strictly processing numerical data.

Despite these proofs of concept, the computational hardware limitations of the era led to severe funding contractions. The Association for the Advancement of Artificial Intelligence (AAAI) held its first conference at Stanford in 1980, coinciding with the release of XCON, the first commercial expert system. However, by 1984, the AAAI warned of an impending AI Winter, a prolonged period in which corporate interest and academic funding plummeted because symbolic logic systems failed to meet the lofty expectations set by the initial pioneers.

5. Backpropagation and Deep Learning

A vital but underappreciated mathematical breakthrough had already occurred, largely unnoticed, before these lean years. In 1969, researchers Arthur Bryson and Yu-Chi Ho formally introduced backpropagation, a method for optimizing multi-stage dynamic systems. While originally developed for industrial control systems and largely ignored by the academic community due to hardware constraints, backpropagation eventually became the algorithmic engine for training multilayer neural networks.

The paradigm shifted irreversibly in 2012, marking the beginning of the deep learning renaissance. This global breakthrough was catalyzed by the sudden convergence of massive digital datasets and the fast parallel processing capabilities of modern Graphics Processing Units. By 2016, DeepMind’s advanced AlphaGo demonstrated complex decision-making at an unprecedented scale, defeating human champions in the highly complex, non-linear game of Go. This event successfully moved the technology from simple academic demonstrations to widespread, highly scalable enterprise deployments across the globe.

6. Data Preparation and Cleaning

Modern computational systems operate on a rigorous, multi-stage mathematical pipeline that transitions raw, unstructured data into actionable predictive intelligence. The machine learning lifecycle is divided into specialized, sequential stages, beginning with tedious data collection and intense data preparation. This initial phase can consume up to 80 percent of an entire project’s total timeline.

The initial phase requires directly addressing the inherent messiness of raw data sets. This involves systematically identifying missing values, resolving exact duplicates, and filtering out useless statistical noise. Data scientists must perform robust mathematical imputation for missing fields utilizing mean, median, or model-based estimations. They systematically remove wild outliers utilizing statistical methods such as Z-scores and Interquartile Ranges (IQR). In domains such as computer vision and natural language processing, data augmentation techniques are heavily employed. Following data cleaning, the raw data must be mathematically transformed. Categorical variables are structured via strict one-hot encoding or specific label encoding, and all numerical features are standardized using tools like StandardScaler to ensure that varying magnitudes do not skew the sensitive neural network’s learning gradients.
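
The cleaning and transformation steps described above can be sketched in a few lines. This is a minimal illustration using only the Python standard library, not a production pipeline: the helper names (`impute_median`, `remove_iqr_outliers`, `standardize`, `one_hot`) and the sample `ages` column are hypothetical, and `standardize` only mirrors the zero-mean, unit-variance behavior that tools like StandardScaler provide.

```python
import statistics

def impute_median(values):
    """Replace missing entries (None) with the median of the observed values."""
    observed = [v for v in values if v is not None]
    median = statistics.median(observed)
    return [median if v is None else v for v in values]

def remove_iqr_outliers(values, k=1.5):
    """Drop values outside the range [Q1 - k*IQR, Q3 + k*IQR]."""
    q1, _, q3 = statistics.quantiles(values, n=4)
    iqr = q3 - q1
    low, high = q1 - k * iqr, q3 + k * iqr
    return [v for v in values if low <= v <= high]

def standardize(values):
    """Rescale to zero mean and unit variance (population standard deviation)."""
    mean = statistics.fmean(values)
    std = statistics.pstdev(values)
    return [(v - mean) / std for v in values]

def one_hot(labels):
    """Map each category to a 0/1 indicator vector; columns follow sorted category order."""
    categories = sorted(set(labels))
    return [[1 if label == c else 0 for c in categories] for label in labels]

# Hypothetical raw column with two missing values and one wild outlier (250).
ages = [34, None, 29, 41, 38, 250, 31, None, 36]
without_outliers = remove_iqr_outliers(impute_median(ages))
cleaned = standardize(without_outliers)
encoded = one_hot(["cat", "dog", "cat", "bird"])
```

The order of operations is a design choice: imputing before outlier removal keeps the row count stable for the quantile estimate, but a real project might prefer to remove outliers first so they cannot influence the imputed value.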

7. Neural Network Training

The operationalization of neural systems requires a fundamental understanding of the dichotomy separating the training phase and the inference phase. Training is the compute-intensive process where a neural network learns by analyzing large datasets and continuously adjusting its internal parameters. During this phase, data flows through the network’s layers in a forward pass. Each artificial neuron applies learned weights to its inputs, and the final output is compared against the correct label by a loss function that quantifies the error.

The calculated error is then propagated backward through the network’s layers in a process known as backpropagation, which determines how much each weight contributed to the error. Optimization algorithms iteratively adjust the model’s millions of weights (its parameters, as distinct from hyperparameters such as the learning rate) to minimize the loss over many epochs. Because training is conducted offline and processes large batches, it tolerates high latency. The total time-to-completion is often measured in hours, days, or even months on large supercomputers.
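
To make the forward pass, loss calculation, and backward pass concrete, here is a minimal sketch of a two-weight network trained with full-batch gradient descent. Everything in it is an illustrative assumption: the toy dataset, the "true" weights used to generate the targets, the tanh activation, and the learning rate are all hypothetical, and real frameworks compute these gradients automatically.

```python
import math

# Toy dataset: targets come from a hypothetical "true" network with w1=1.5, w2=0.8.
XS = [-1.0, -0.5, 0.0, 0.5, 1.0]
TS = [0.8 * math.tanh(1.5 * x) for x in XS]

def train(w1=0.3, w2=0.2, learning_rate=0.1, epochs=2000):
    """Full-batch gradient descent on y = w2 * tanh(w1 * x) with mean squared error.

    w1 and w2 are the learned parameters; learning_rate and epochs are hyperparameters.
    """
    losses = []
    n = len(XS)
    for _ in range(epochs):
        grad_w1 = grad_w2 = loss = 0.0
        for x, t in zip(XS, TS):
            # Forward pass: input -> hidden activation -> output.
            h = math.tanh(w1 * x)
            y = w2 * h
            loss += (y - t) ** 2
            # Backward pass: apply the chain rule from the output toward the input.
            d_y = 2.0 * (y - t)                  # dLoss/dy
            grad_w2 += d_y * h                   # dLoss/dw2
            d_h = d_y * w2                       # dLoss/dh
            grad_w1 += d_h * (1.0 - h * h) * x   # dLoss/dw1 (tanh' = 1 - tanh^2)
        losses.append(loss / n)
        # Gradient descent step on the averaged gradients.
        w1 -= learning_rate * grad_w1 / n
        w2 -= learning_rate * grad_w2 / n
    return w1, w2, losses

w1, w2, losses = train()
```

Inspecting `losses[0]` against `losses[-1]` shows the error shrinking across epochs, which is the behavior the training phase relies on at far larger scale.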

8. Live Inference

In stark contrast, inference is the fast, practical application of the fully trained model in the real world. Inference uses the finalized, frozen neural network to make decisions about new, unseen inputs. During live inference, the model’s internal weights remain entirely static. The model does not learn or adjust parameters any further; it applies the patterns it has learned to output classifications, numerical probabilities, or generated text.

Because live inference is typically a user-facing operation integrated directly into live software applications, it demands ultra-low latency, requiring fast responses within milliseconds to maintain system fluidity. Consequently, while massive training requires clusters of specialized compute hardware, live inference demands extreme architectural efficiency and high memory bandwidth, often running smoothly on singular GPUs or specialized edge processors located inside mobile phones.
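
The contrast with training can be shown with a small sketch of inference on frozen weights. The weights, bias, and threshold below are hypothetical stand-ins for a trained model, stored in an immutable tuple to emphasize that nothing is updated at prediction time.

```python
import math

# Hypothetical parameters standing in for a trained model; values are illustrative only.
FROZEN_WEIGHTS = (1.2, -0.7)
FROZEN_BIAS = 0.1

def predict_proba(features):
    """Single forward pass through a logistic unit; no gradients, no weight updates."""
    z = sum(w * x for w, x in zip(FROZEN_WEIGHTS, features)) + FROZEN_BIAS
    return 1.0 / (1.0 + math.exp(-z))

def classify(features, threshold=0.5):
    """Turn the predicted probability into a 0/1 decision."""
    return int(predict_proba(features) >= threshold)

positive = classify((2.0, 0.5))
negative = classify((-1.0, 1.0))
```

Because each call is a single forward pass with no bookkeeping for gradients, the per-request cost is far lower than a training step, which is what makes millisecond-scale responses feasible.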

9. The Transformer Architecture

The contemporary landscape of generative artificial intelligence, particularly Large Language Models (LLMs), is predicated entirely on the Transformer architecture. Formally introduced in the landmark 2017 paper Attention Is All You Need, the Transformer fundamentally resolved the severely restrictive structural limitations of all preceding sequential models. Prior to the Transformer, Recurrent Neural Networks (RNNs) provided a basic equivalent of memory to process sequential data, but they were forced to analyze long inputs word-by-word. This sequential necessity created a massive information bottleneck over long distances and prevented the rapid parallelization of heavy computation.

The Transformer resolved this issue by analyzing entire data sequences simultaneously through a mechanism known as self-attention. Because the Transformer processes all tokens of a sequence in parallel, it has no built-in notion of word order. To inject sequential context back into the model, the architecture utilizes Positional Encoding, which adds a position-dependent signal to each initial word embedding.
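
One common way to build such a positional signal is the sinusoidal scheme from the 2017 paper; the text above does not say which variant is meant, so treat the sketch below as an assumption. The function names and the tiny example dimensions are hypothetical.

```python
import math

def positional_encoding(seq_len, d_model):
    """Sinusoidal encoding: even columns use sin, odd columns use cos of pos / 10000^(i/d_model)."""
    pe = [[0.0] * d_model for _ in range(seq_len)]
    for pos in range(seq_len):
        for i in range(0, d_model, 2):
            angle = pos / (10000 ** (i / d_model))
            pe[pos][i] = math.sin(angle)
            if i + 1 < d_model:
                pe[pos][i + 1] = math.cos(angle)
    return pe

def add_positions(embeddings):
    """Add the positional signal element-wise to each token embedding."""
    pe = positional_encoding(len(embeddings), len(embeddings[0]))
    return [[e + p for e, p in zip(row, pe_row)] for row, pe_row in zip(embeddings, pe)]

# The same hypothetical token embedding repeated at three positions.
token = [0.5, -0.2, 0.1, 0.9]
encoded = add_positions([token, token, token])
```

After the addition, identical tokens at different positions carry different vectors, which is how the otherwise order-blind attention layers can distinguish them.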

10. The Attention Mechanism

The self-attention mechanism allows a neural network to weigh the contextual relevance of every element in an input sequence relative to every other element, loosely analogous to how a listener focuses on one voice in a crowded room while tuning out ambient noise. For each token in an input sequence, the model projects the token’s embedding into three distinct vectors by multiplying it against three learned weight matrices: a Query vector, a Key vector, and a Value vector.

The alignment score between any two tokens is the dot product of the Query vector of the current token and the Key vector of the target token; in the original Transformer this score is also divided by the square root of the key dimension to keep values in a stable range. The scores for a given token are then passed through a softmax function, which maps them to normalized attention weights between 0 and 1 that sum to 1.0. Finally, each weight multiplies its corresponding Value vector, and the results are summed, producing an output that is a contextualized, weighted combination of all the input information.
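
The query, key, and value steps above can be written out directly. The following is a minimal single-head sketch in plain Python, assuming the scaled dot-product form from the 2017 paper (scores divided by the square root of the key dimension); the helper names, the identity weight matrices, and the toy embeddings are hypothetical.

```python
import math

def softmax(scores):
    """Numerically stable softmax: outputs lie in (0, 1) and sum to 1."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def project(vec, matrix):
    """Multiply a row vector by a matrix given as a list of rows."""
    return [sum(v * row[j] for v, row in zip(vec, matrix)) for j in range(len(matrix[0]))]

def self_attention(embeddings, w_q, w_k, w_v):
    """Return (outputs, attention_weights) for one attention head."""
    queries = [project(x, w_q) for x in embeddings]
    keys = [project(x, w_k) for x in embeddings]
    values = [project(x, w_v) for x in embeddings]
    scale = math.sqrt(len(keys[0]))
    outputs, all_weights = [], []
    for q in queries:
        # Alignment score of this query against every key, then normalize.
        scores = [sum(a * b for a, b in zip(q, k)) / scale for k in keys]
        weights = softmax(scores)
        # Weighted sum of the value vectors.
        out = [sum(w * v[j] for w, v in zip(weights, values)) for j in range(len(values[0]))]
        outputs.append(out)
        all_weights.append(weights)
    return outputs, all_weights

identity = [[1.0, 0.0], [0.0, 1.0]]          # stand-in for learned weight matrices
tokens = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
outputs, weights = self_attention(tokens, identity, identity, identity)
```

With identity projections, the first token attends equally to itself and to the third token (both share its first component) and less to the second, which illustrates how the dot product rewards aligned vectors.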

11. Hardware and the Exascale Era

The unprecedented exponential scaling of algorithmic capabilities is currently bottlenecked entirely by the harsh physical limitations of silicon manufacturing and exact memory bandwidth. With global data center construction projected to approach a staggering $2.9 trillion through 2028, the entire semiconductor industry is experiencing unprecedented capital expenditure.

| Hardware Manufacturer | Flagship Architecture | Primary Market Focus |
|---|---|---|
| NVIDIA | B300 (Blackwell Ultra) | Heavyweight LLM Training |
| AMD | MI325X / MI400 | High-memory Inference |
| Google | TPU v7 (Ironwood) | Integrated Cloud Systems |
| AWS | Trainium3 | Native AWS Cloud Model Training |
| IBM | Telum II | Financial Mainframes |

NVIDIA currently leads the AI accelerator market, having transitioned from its roots in graphics processing to dominate the foundational layer of generative infrastructure. By 2026, the industry standard is shifting from the older Hopper architecture to the Blackwell generation. The NVIDIA B300 features 288GB of high-bandwidth memory per GPU, and a single GB300 NVL72 rack-scale system, which combines 72 of these GPUs, delivers roughly 1,100 petaflops of dense low-precision (FP4) inference performance and can process over 12,900 tokens per second per GPU.

Conversely, Advanced Micro Devices (AMD) has positioned itself directly as the primary merchant silicon challenger with its powerful Instinct series. The AMD MI325X directly targets memory-bound LLM workloads, boasting a bandwidth of 6 terabytes per second. To reduce dependency on these third-party hardware vendors and dramatically minimize high infrastructure costs, hyperscalers are aggressively developing custom silicon. Google holds a massive 58 percent share of the custom cloud accelerator market through its Tensor Processing Unit (TPU) ecosystem. The 2026 release of the powerful TPU v7 yields a staggering 4,614 teraflops per chip.

12. The Software Ecosystem

The software stack underpinning these massive systems is diverse, with specific programming languages serving distinct roles across the deployment pipeline. Python remains the undisputed foundational language of the global industry primarily due to its clean syntax, versatility, and vast ecosystem of specialized libraries. Python excels at rapid prototyping and acts as the interface for massive deep learning frameworks such as Google’s TensorFlow and Meta’s PyTorch.

However, when these models move from experimental training into production inference, computational efficiency becomes paramount. For these latency-critical enterprise environments, developers rely on fast C++ and secure Java, which provide granular control over memory management and exact execution speed. The software ecosystem is defined by the dynamic friction between proprietary, closed-source models and the rapidly expanding open-source community. While proprietary systems like OpenAI’s GPT-5 offer expert-level performance, open-weight disruptors like Meta’s Llama 4 Scout have fundamentally shifted the global market by democratizing access to massive 10-million token context windows.

13. Agentic AI and Autonomous Workflows

By 2026, the software engineering paradigm has shifted fundamentally away from basic prompt-response models over to the advanced deployment of Agentic AI. Early generative systems functioned as simple wrapper programs, requiring continuous human prompting and heavy oversight. In stark contrast, advanced agentic systems are designed to operate entirely autonomously without human intervention.

These complex agents reason through multi-step technical workflows, independently query complex external APIs, coordinate with other specialized software agents, and write, test, and execute their very own code. Consequently, frameworks enabling multi-agent orchestration, such as AutoGen, CrewAI, and LangChain, have rapidly become the critical enterprise standard used for building systems that independently take autonomous action rather than passively generating text.

14. The Multimodal Frontier

The archaic era of unimodal, text-only intelligence has concluded. The state-of-the-art foundational models of 2026 process, reason, and seamlessly generate across complex text, rich audio, dense image, and long video simultaneously, functioning as deeply integrated, unified systems.

| Foundational Model | Primary Architectural Strength | Context Window Limit |
|---|---|---|
| Claude 4.5 Sonnet | Agentic Coding / Hybrid Reasoning | 1 Million Tokens |
| Gemini 2.5 Pro | Multimodal Integration / Sparse MoE | 1 Million Tokens |
| OpenAI GPT-5 | Unified Routing / Raw Knowledge | 400,000 Tokens |
| Llama 4 Scout | Open-Weight Disruption | 10 Million Tokens |

The competitive landscape is defined by highly specialized architectures. Anthropic’s Claude 4.5 Sonnet is widely regarded as a leader in autonomous agentic software engineering. Utilizing a safety-first hybrid reasoning approach governed by Constitutional principles, Claude can operate autonomously for hours, resolving a reported 70.6 percent of real-world GitHub issues on the SWE-bench Verified benchmark. Google’s Gemini 2.5 Pro operates on a sparse Mixture-of-Experts (MoE) architecture featuring a 1-million token context window, functioning as a thinking model that dynamically routes compute resources.
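
The idea of dynamically routing compute in a sparse Mixture-of-Experts layer can be illustrated with a generic top-k gating sketch. This is a textbook-style simplification and not a description of how Gemini or any other named model is implemented; the expert functions, gate logits, and helper names are hypothetical.

```python
import math

def softmax(scores):
    """Numerically stable softmax over a list of scores."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def route_top_k(gate_logits, k=2):
    """Pick the k highest-scoring experts and renormalize their weights to sum to 1."""
    ranked = sorted(range(len(gate_logits)), key=lambda i: gate_logits[i], reverse=True)
    chosen = ranked[:k]
    weights = softmax([gate_logits[i] for i in chosen])
    return list(zip(chosen, weights))

def moe_forward(x, experts, gate_logits, k=2):
    """Run only the selected experts and blend their outputs; the rest stay idle."""
    return sum(weight * experts[i](x) for i, weight in route_top_k(gate_logits, k))

# Four hypothetical experts; in a real model each would be a feed-forward sub-network.
experts = [lambda x: x + 1, lambda x: 2 * x, lambda x: -x, lambda x: x * x]
gate_logits = [0.1, 2.0, -1.0, 2.0]   # in practice produced by a learned gating network
routes = route_top_k(gate_logits)
blended = moe_forward(3.0, experts, gate_logits)
```

Only two of the four experts execute for this input, which is the source of the compute savings: total parameter count can grow while the work per token stays roughly constant.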

15. Breakthroughs in Biomolecular Modeling

Beyond advanced foundational reasoning, multimodal capabilities have revolutionized specific complex creative and technical scientific domains. In scientific research, advanced systems have drastically accelerated biomolecular modeling. The highly anticipated AlphaFold 3, developed by Google DeepMind and Isomorphic Labs, aggressively moved far beyond simple single-chain protein folding to jointly predict the physical structures of dense protein-ligand, robust protein-nucleic acid, and tight protein-protein complexes.

By utilizing an updated, advanced diffusion-based architecture rather than relying on slow traditional physics-based tools, AlphaFold 3 successfully achieved unprecedented accuracy in predicting complex drug-like interactions, scoring exactly 50 percent higher on the brutal PoseBusters benchmark. This massive breakthrough enables full-stack pharmaceutical platforms to rapidly design de novo protein binders and synthesize new, advanced drug candidates autonomously.

16. Embodied AI and Robotics

The physical manifestation of artificial intelligence has transitioned rapidly from rigid, mechanical automation to adaptive, intelligent physical machines, a field often called Physical AI. Traditional robotics relied heavily on simple, rule-based navigation. Older line-following robots, for example, used infrared sensors to track high-contrast pathways. These deterministic, pre-programmed approaches failed in unstructured, highly dynamic physical environments.

Modern intelligent robots bypass these massive, restrictive limitations by utilizing advanced Sensor Fusion. This mathematical paradigm aggregates and reconciles conflicting data from disparate sensory inputs, such as high-resolution optical cameras, highly accurate LiDAR, deep radar, and sensitive inertial measurement units, to create a cohesive, dynamic perception of the entire operational environment. Furthermore, advanced humanoid robots have successfully hit the mass commercial market, with companies like Boston Dynamics deploying models capable of learning complex physical manual labor tasks in under a single day through advanced spatial reasoning algorithms.
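
One of the simplest ways to reconcile conflicting sensor readings is inverse-variance weighting, where more precise sensors receive more influence. The sketch below assumes independent, unbiased sensors with known noise variances; production systems typically use richer methods such as Kalman filters, and the sensor values shown are hypothetical.

```python
def fuse(measurements):
    """Fuse (value, variance) pairs by inverse-variance weighting.

    Returns (fused_value, fused_variance). Assumes independent, unbiased sensors.
    """
    inverse_variances = [1.0 / variance for _, variance in measurements]
    fused_variance = 1.0 / sum(inverse_variances)
    weighted_sum = sum(value * w for (value, _), w in zip(measurements, inverse_variances))
    return fused_variance * weighted_sum, fused_variance

# Hypothetical distance-to-obstacle readings in meters: (value, noise variance).
lidar = (10.0, 0.01)
radar = (10.6, 0.09)
camera = (9.5, 0.25)
distance, variance = fuse([lidar, radar, camera])
```

The fused estimate lands close to the LiDAR reading because it has the lowest variance, and the fused variance is smaller than that of any single sensor, which is the practical payoff of combining them.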

17. Market Value and Monetization

The transition of advanced computational intelligence from experimental academic research directly to enterprise business infrastructure has aggressively triggered an unprecedented global industrial expansion. The global market, estimated at $390.91 billion in 2025, accelerated to $539.45 billion in 2026. Respected market analysts project an astronomical financial trajectory, forecasting the entire sector to reach a staggering $3.49 trillion by 2033.
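
The growth rates implied by these figures can be checked with a short calculation. The sketch below uses only the market values quoted above and the standard compound annual growth rate formula; the function and variable names are illustrative.

```python
def cagr(start_value, end_value, years):
    """Compound annual growth rate between two values separated by `years` years."""
    return (end_value / start_value) ** (1.0 / years) - 1.0

# Market sizes in billions of US dollars, as quoted in the text.
growth_2025_to_2026 = cagr(390.91, 539.45, 1)
implied_2026_to_2033 = cagr(539.45, 3490.0, 7)

print(f"2025 to 2026 growth: {growth_2025_to_2026:.1%}")
print(f"Implied 2026 to 2033 CAGR: {implied_2026_to_2033:.1%}")
```

The single-year jump works out to roughly 38 percent, while the 2033 projection implies sustained growth of a little over 30 percent per year, so the forecast assumes a modest slowdown rather than acceleration.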

As the application layer rapidly matures, software vendors are adopting structured, profitable monetization strategies to align massive computational costs with customer value extraction. The baseline model is usage-based pricing, which is a simple pay-as-you-go format where total revenue is generated incrementally via specific API calls, exact documents processed, or pure gigabytes analyzed. Furthermore, mature software vendors are pioneering outcome-based pricing. Under an innovative outcome-based contract, companies charge strictly for tangible business results, such as successfully resolved customer service tickets, directly pairing the software cost with the exact human labor value successfully saved.

18. Disruption in Healthcare

Nowhere is outcome-based disruption more evident than in the complex medical sector. Advanced coding platforms successfully utilize advanced natural language processing to autonomously translate unstructured clinical documentation into strict, standardized ICD and highly accurate CPT billing codes.

| Platform Name | Primary Healthcare Target | Key Differentiators |
|---|---|---|
| Medicodio | General Healthcare Providers | High workflow automation and broad specialty integration. |
| Fathom Health | Large Health Systems | Emphasizes massive volume processing. |
| CodaMetrix | Academic Medical Centers | Designed for complex enterprise deployments. |
| Nym Health | Value-Based Care | Fully autonomous processing with strong audit rules. |

Traditional manual medical coding is a labor-intensive process: up to 80 percent of medical bills reportedly contain errors, and coding problems reportedly account for 42 percent of insurance claim denials. Automated coding systems reduce these costly human errors, accelerating revenue cycles for overwhelmed hospital networks.

19. Cybersecurity and Defensive AI

The unprecedented exponential increase in powerful capabilities has fundamentally altered the global cyber threat landscape, effectively democratizing cybercrime. Malicious actors are leveraging massive open-source models to orchestrate hyper-personalized social engineering campaigns at massive scale, easily bypassing old, traditional perimeter network defenses.

To combat these machine-speed threats, the global cybersecurity industry has integrated defensive algorithms and is transitioning toward Continuous Threat Exposure Management (CTEM). The foundational strategy for the 2026 enterprise relies heavily on Zero Trust architecture, which discards the concept of a secure network perimeter in favor of continuously authenticating every user and verifying their behavior.

20. Environmental Sustainability

While the economic benefits are profound, the staggering computational intensity required for successfully training massive models exacts a severe environmental toll. Training a massive frontier model comprising hundreds of billions of mathematical parameters requires continuous processing across massive server farms for several long months, generating an enormous carbon footprint.

The macroeconomic energy forecasts are stark: by 2030, total data center electricity usage in the United States could account for 7.4 percent of total national grid capacity. Beyond direct electricity demand, this hardware requires vast amounts of fresh water to cool hyperscale data centers.

21. Global Regulatory Frameworks

Recognizing the systemic risks inherent in unregulated models, global governments have abandoned laissez-faire observation in favor of structured governance. The European Union has enacted the strict AI Act, formally representing the world’s most comprehensive risk-based regulatory framework.

While the Act’s first provisions, including obligations for general-purpose models, took effect during 2025, specific compliance obligations for systems officially classified as high-risk become legally enforceable in August 2026. The financial penalties for non-compliance are severe, reaching up to €35 million or 7 percent of global annual turnover, whichever is higher. Meanwhile, the United States has adopted a decentralized, market-driven approach. Executive Order 14110, which initially guided federal policy, was rescinded in January 2025, and individual states such as California have pursued their own legislation, including SB-1047, which was vetoed in 2024.

22. Quantum Integrations

The ultimate theoretical frontier for accelerating processing beyond the physical limitations of traditional standard semiconductors lies precisely in highly advanced Quantum Machine Learning (QML). Quantum computing bypasses classical binary logic by utilizing powerful qubits, allowing complex mathematical optimizations to occur across multidimensional probability states.

In late 2025, Google reported a verifiable quantum milestone on its superconducting Willow chip. Running the Quantum Echoes algorithm, the Willow processor completed a physics simulation task roughly 13,000 times faster than the best known classical approach on the world’s most powerful supercomputers. The algorithm evolves the quantum system forward, applies a small perturbation, and then runs the evolution in reverse; the resulting "echo" signal reveals how the perturbation spread through the system.

23. The Trajectory Toward AGI

Artificial General Intelligence (AGI), defined simply as an autonomous system capable of surpassing human cognitive performance across all economically valuable tasks, remains the ultimate theoretical objective of leading research laboratories. While early industry consensus predicted that AGI would not emerge until the 2040s, the rapid scaling of agentic systems and multi-modal reasoning has aggressively compressed timelines. Current community insights suggest a 10 percent probability of reaching the AGI threshold by 2026, scaling to a 50 percent probability by 2041. More optimistic projections from industry leaders suggest that advancements in computational power and automated self-improving code generation could push cognitive boundaries past human capabilities as early as 2027.

The societal transition toward AGI introduces massive macroeconomic disruption. By 2030, global demographic macrotrends and system integration are projected to create approximately 170 million new jobs while simultaneously displacing 92 million legacy roles. Knowledge workers, particularly those in administrative, customer support, and basic analytical sectors, face immediate displacement risks. Extensive models predict that up to 70 percent of routine office tasks could be fully automated by the end of the decade, with an estimated 300 million full-time equivalent jobs globally affected by the technology’s widespread adoption. This level of structural displacement has reignited serious policy discussions surrounding Universal Basic Income (UBI) and other socioeconomic safety nets as potential necessities to manage the transition.

The World Economic Forum identifies multiple divergent scenarios for this transition. In the Age of Displacement scenario, rapid exponential advancement collides with a workforce lacking critical skills. Businesses automate roles rapidly to overcome talent shortages, leading to severe unemployment spikes, the collapse of legacy social safety nets, and the extreme concentration of power within a few tech platforms. Conversely, in the Supercharged Progress scenario, widespread proactive workforce readiness initiates the agentic leap. Rather than being universally replaced, the human worker evolves into an agent orchestrator, directing and auditing portfolios of autonomous digital workers to achieve unprecedented productivity. In this optimistic paradigm, the extreme efficiency generated unlocks vast economic abundance, mitigating fears of mass dystopia in favor of a job singularity.