| The Universal Symphony | |
|---|---|
| Earliest Known Instrument | Divje Babe Flute (approx. 43,000 years old) |
| Early Acoustic Pioneer | Pythagoras |
| Core Structural Elements | Melody, Harmony, Rhythm, Timbre |
| Human Hearing Range | 20 Hz to 20,000 Hz |
| Classification System | Hornbostel-Sachs (1914) |
| Highest Music Award | The Grammy Awards |
| Best-Selling Album | Thriller by Michael Jackson |
| Top Streaming Platforms | Spotify, Apple Music, YouTube Music |
| Streaming Market Share | Over 62% globally (2020) |
| Prominent AI Generators | Suno, Udio, AIVA, Mubert |
| Core Educational Methods | Suzuki, Kodály, Orff, Dalcroze, Gordon |
| Spatial Audio Standard | Ambisonics and HRTF |
| Recording Academy | Grammy Official Portal |
| Audio Engineering Society | AES Official Portal |

Music, at its most fundamental structural level, is the deliberate organization of sound and silence across a temporal axis. The architectural integrity of any musical composition is governed by a universal set of core elements that interact to produce meaning and aesthetic value. These elements include harmony, melody, texture, dynamics, pitch, rhythm, timbre, tempo, time, beat, structure, meter, and growth.

The pedagogical approach to music, its underlying acoustic physics, its deep psychological impact on the human brain, and the explosive digital economics surrounding it form a massive, interconnected ecosystem. This comprehensive report breaks down the multidisciplinary dimensions of music, exploring how organized sound shapes human culture, law, mental health therapy, and modern artificial intelligence.

1. Foundations of Music Theory

The foundational elements of music operate on distinct axes, interacting to create the emotional journey of a song.

- Melody: Functions on a horizontal axis, representing a sequence of single notes arranged to create a distinct contour. This contour can rise, fall, arch, or undulate, giving the composition its emotional character as it moves forward in time.

- Harmony: Operates on a vertical axis, occurring when multiple pitches sound simultaneously to construct chords. The interplay of melody and harmony dictates the emotional undercurrent of a piece, creating cycles of tension and resolution.

- Intervals: The distances between pitches are the building blocks of both harmony and melody. Sounding two or more notes simultaneously creates harmonic intervals, while playing single notes in sequence creates melodic intervals.

- Rhythm: The engine of musical momentum. It organizes sounds into temporal divisions, utilizing steady pulses and meters to dictate the pace, duration, and phrasing of the composition.
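As a rough illustration of the difference between melodic and harmonic intervals, the sketch below measures intervals in semitones. The use of MIDI note numbers (middle C = 60) and the interval-name table are assumptions brought in for the example; they are not part of the discussion above.

```python
# Names for simple intervals of 0 to 12 semitones.
INTERVAL_NAMES = [
    "unison", "minor 2nd", "major 2nd", "minor 3rd", "major 3rd",
    "perfect 4th", "tritone", "perfect 5th", "minor 6th", "major 6th",
    "minor 7th", "major 7th", "octave",
]

def interval_name(low, high):
    """Name the interval between two MIDI note numbers (one octave at most)."""
    semitones = abs(high - low)
    if semitones > 12:
        raise ValueError("compound intervals are outside this sketch")
    return INTERVAL_NAMES[semitones]

def melodic_intervals(melody):
    """Intervals between consecutive notes of a single line (horizontal axis)."""
    return [interval_name(a, b) for a, b in zip(melody, melody[1:])]

def harmonic_intervals(chord):
    """Intervals from the lowest note of a chord to each note above it (vertical axis)."""
    notes = sorted(chord)
    return [interval_name(notes[0], n) for n in notes[1:]]

c_major_triad = [60, 64, 67]   # C4, E4, G4 sounded together
opening = [60, 62, 64, 60]     # C4, D4, E4, C4 played in sequence

print(harmonic_intervals(c_major_triad))
print(melodic_intervals(opening))
```

The same `interval_name` helper serves both cases; only the way the notes are paired differs.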

The pedagogical approach to music theory has undergone a significant paradigm shift in the 21st century. Historically, theoretical instruction was heavily anchored in four-part voice-leading exercises. Modern theoretical analysis now emphasizes motivic development, fragmentation, subphrases, and various forms of melodic alteration. Students are trained to understand how melodies are manipulated through intervallic change, rhythmic augmentation, diminution, ornamentation, extension, and retrograde.
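Several of the melodic manipulations named above can be written as small list transformations. The following is a minimal sketch, assuming a motif is stored as (MIDI pitch, duration in beats) pairs; that representation and the sample motif are illustrative choices, not a standard.

```python
from fractions import Fraction

# A four-note motif as (MIDI pitch, duration in beats); values are illustrative.
motif = [
    (60, Fraction(1)),
    (62, Fraction(1, 2)),
    (64, Fraction(1, 2)),
    (67, Fraction(2)),
]

def augment(melody, factor=2):
    """Rhythmic augmentation: multiply every duration by `factor`."""
    return [(pitch, dur * factor) for pitch, dur in melody]

def diminish(melody, factor=2):
    """Rhythmic diminution: divide every duration by `factor`."""
    return [(pitch, dur / factor) for pitch, dur in melody]

def retrograde(melody):
    """Retrograde: state the notes in reverse order."""
    return list(reversed(melody))

def fragment(melody, start, length):
    """Fragmentation: extract `length` notes beginning at index `start`."""
    return melody[start:start + length]

print(augment(motif))
print(retrograde(motif))
```

Using `Fraction` keeps durations exact, so repeated augmentation and diminution return to the original values without rounding drift.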

2. Musical Notation and Symbols

To communicate complex acoustic ideas, musicians use a highly standardized system of visual symbols and notes. The foundation of written music is the Staff, which consists of five horizontal lines and four spaces where notes are placed according to their pitch.

Clefs are placed at the beginning of the staff to indicate the pitch range. The Treble Clef (also known as the G-clef) is used for higher-pitched instruments like the violin, flute, or female voice. The Bass Clef (or F-clef) is used for lower-pitched instruments like the cello, tuba, or male voice.

The notes themselves dictate rhythm and duration. In common (4/4) time, a Whole Note looks like an empty oval and lasts four beats. A Half Note adds a vertical stem to the oval and lasts two beats. A Quarter Note fills in the oval with solid black and lasts one beat. A Rest is a symbol that indicates a specific duration of silence, which is just as rhythmically important as the sounding notes.
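To make the beat counts concrete, the sketch below converts note values into seconds at a given tempo. It assumes the quarter note carries the beat, as in 4/4 time; the function name and the tempo are illustrative.

```python
# Beats per note value when the quarter note gets one beat (e.g. 4/4 time).
BEATS = {"whole": 4.0, "half": 2.0, "quarter": 1.0}

def seconds(note_value, bpm):
    """Length in seconds of a note, or of a rest of the same value, at `bpm` beats per minute."""
    return BEATS[note_value] * 60.0 / bpm

for name in ("whole", "half", "quarter"):
    print(name, seconds(name, bpm=120))
```

A rest of a given value occupies exactly the same span of time as the corresponding note, so the same table applies to both.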

3. The Physics of Sound

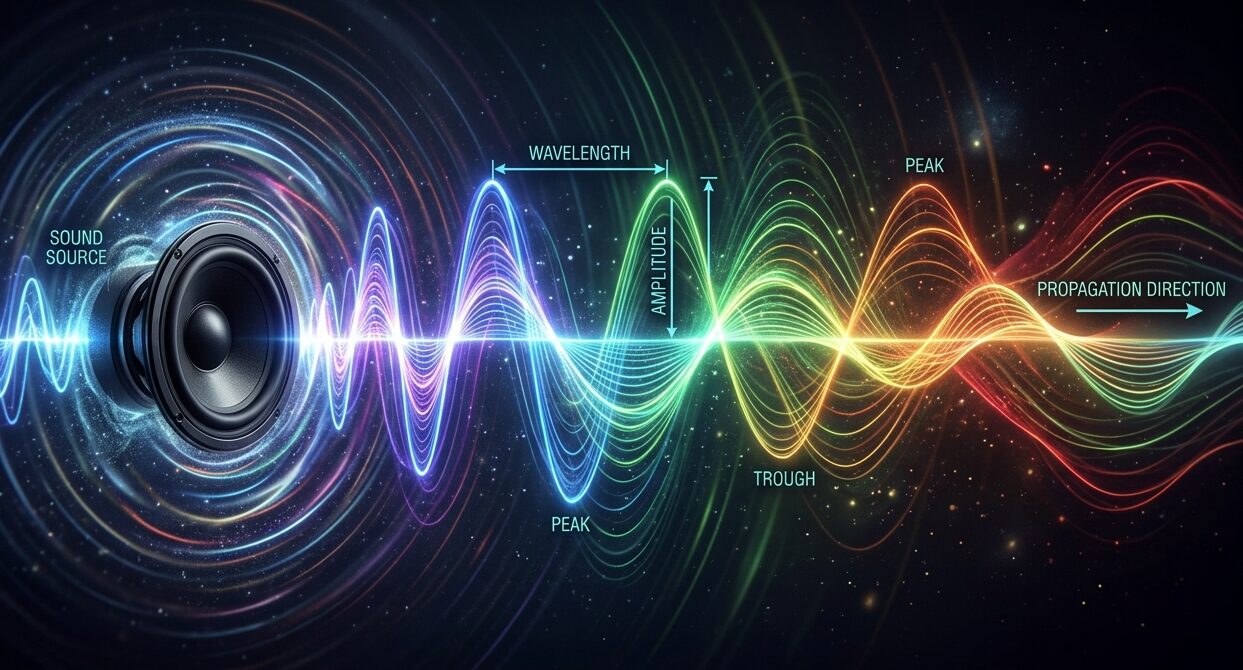

The aesthetic experience of music is intrinsically bound to the empirical laws of physics and acoustics. Sound propagates as a mechanical wave through a physical medium (gas, liquid, or solid), carrying both energy and momentum via the collective excitation and displacement of atoms.

In longitudinal waves, atoms are displaced parallel to the direction of propagation; in transverse waves, they are displaced perpendicular to it. Sound in air travels as a longitudinal wave. Its velocity is determined by the medium’s bulk modulus and density and is approximately 344 meters per second at sea level and room temperature. A sound’s frequency (measured in hertz) determines its perceived pitch, and its wavelength equals the speed of sound divided by that frequency.
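The relationship between bulk modulus, density, and speed can be checked numerically with v = sqrt(K / ρ), and wavelength follows from λ = v / f. The sketch below is a rough calculation under assumed values for dry air near 20 °C (adiabatic bulk modulus taken as 1.4 times atmospheric pressure, density 1.204 kg/m³); it lands close to the figure quoted above.

```python
import math

def speed_of_sound(bulk_modulus_pa, density_kg_m3):
    """Speed of a longitudinal wave in a fluid: v = sqrt(K / rho)."""
    return math.sqrt(bulk_modulus_pa / density_kg_m3)

def wavelength(speed_m_s, frequency_hz):
    """Wavelength in metres: lambda = v / f."""
    return speed_m_s / frequency_hz

# Assumed values for dry air near 20 °C at sea-level pressure.
AIR_BULK_MODULUS = 1.4 * 101_325   # adiabatic bulk modulus (gamma * pressure), in Pa
AIR_DENSITY = 1.204                # kg/m^3

v = speed_of_sound(AIR_BULK_MODULUS, AIR_DENSITY)
print(f"speed of sound: {v:.1f} m/s")
print(f"wavelength of A4 (440 Hz): {wavelength(v, 440.0):.3f} m")
```

Because the speed is fixed by the medium, doubling the frequency halves the wavelength.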

Musical instruments produce sound through resonant frequencies and standing waves. A standing wave is generated by the superposition of left-going and right-going traveling waves reflecting off the boundaries of a system, such as the fixed ends of a stretched string. The fundamental frequency determines the primary pitch, while the complex timbre of the instrument is shaped by its overtones, or harmonic partials.
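For an ideal string fixed at both ends, the standing-wave frequencies are f_n = (n / 2L) · sqrt(T / μ), where L is the length, T the tension, and μ the mass per unit length. The sketch below computes the fundamental and its first overtones; the numeric values are chosen only to give round results and do not describe a real instrument.

```python
import math

def string_harmonics(length_m, tension_n, linear_density_kg_m, count=4):
    """Frequencies (Hz) of the first `count` standing-wave modes of an ideal string."""
    wave_speed = math.sqrt(tension_n / linear_density_kg_m)  # speed of waves on the string
    fundamental = wave_speed / (2 * length_m)                # n = 1 mode
    return [n * fundamental for n in range(1, count + 1)]

# Illustrative values only: 0.5 m string, 100 N tension, 10 g per metre.
print(string_harmonics(0.5, 100.0, 0.01))
```

The first entry is the fundamental that sets the perceived pitch; the remaining entries are the harmonic partials whose relative strengths shape timbre.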

This understanding of acoustic principles has enabled the manipulation of sound through electronic synthesis. Modern digital and analog synthesizers use specific mathematical techniques to sculpt audio waveforms, including Subtractive synthesis (filtering a harmonically rich waveform), Additive synthesis (combining individual sine waves), and Frequency Modulation (using one oscillator to modulate the frequency of another).
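The three techniques can be sketched in a few lines each. This is a simplified illustration using only the standard library, not how any particular synthesizer is implemented: the sample rate, the one-pole filter, and the phase-modulation form of FM (a common digital formulation) are all assumptions of the example.

```python
import math

SAMPLE_RATE = 8000  # samples per second; kept low so the lists stay small

def additive(freq, partials, n_samples):
    """Additive synthesis: sum sine partials at integer multiples of `freq`.
    Amplitudes of 1/k approximate a sawtooth wave."""
    out = []
    for i in range(n_samples):
        t = i / SAMPLE_RATE
        out.append(sum(math.sin(2 * math.pi * k * freq * t) / k
                       for k in range(1, partials + 1)))
    return out

def subtractive(signal, alpha):
    """Subtractive synthesis: a one-pole low-pass filter that removes
    high-frequency content from a rich input (0 < alpha <= 1)."""
    out, prev = [], 0.0
    for x in signal:
        prev = prev + alpha * (x - prev)
        out.append(prev)
    return out

def fm(carrier, modulator, index, n_samples):
    """Frequency modulation: a modulator oscillator varies the carrier's phase;
    `index` controls how strongly, and therefore how bright the result is."""
    out = []
    for i in range(n_samples):
        t = i / SAMPLE_RATE
        out.append(math.sin(2 * math.pi * carrier * t
                            + index * math.sin(2 * math.pi * modulator * t)))
    return out

rich = additive(220.0, partials=10, n_samples=400)
mellow = subtractive(rich, alpha=0.1)
bell_like = fm(carrier=440.0, modulator=660.0, index=3.0, n_samples=400)
print(max(rich), max(mellow), max(bell_like))
```

Lowering `alpha` darkens the filtered tone, while raising `index` adds sidebands to the FM tone; both are single-parameter controls over timbre.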

4. Musical Instruments and Classifications

To organize the immense diversity of global instrumentation, the Hornbostel-Sachs classification system was established in 1914 by Erich von Hornbostel and Curt Sachs. Expanding upon older methods, this system categorizes instruments by how they produce sound (that is, by what physically vibrates) rather than by their cultural prestige, providing a universal framework applicable to any culture.

| Classification | Mechanism of Action | Examples |
|---|---|---|
| Idiophones | Produce sound through the vibration of rigid, solid materials without the use of membranes or strings. The instrument itself vibrates when struck or shaken. | Xylophones, glockenspiels, cymbals, castanets, and bells. |
| Membranophones | Produce sound using stretched skins or membranes. The membrane is usually struck (or, in the kazoo, set vibrating by the player’s voice), often over a hollow resonating chamber. | Snare drums, timpani, bongos, and kazoos. |
| Chordophones | Produce sound by vibrating strings stretched between two points. The string’s tension, length, and mass determine the pitch. | Guitars, violins, pianos (struck strings), and harps. |
| Aerophones | Produce sound by vibrating a column of air. The player blows air into or across a tube, and opening or closing holes or valves changes the effective length of the air column, altering the pitch. | Flutes, trumpets, saxophones, and pipe organs. |
| Electrophones | Produce sound through electronic oscillation and amplification, requiring electrical power to generate a signal. This category was added to the scheme by Sachs in 1940. | Synthesizers, theremins, and digital drum machines. |

5. History and Global Genres

The chronological evolution of music mirrors the developmental trajectory of human civilization, serving as an auditory record of societal values, religious shifts, and technological milestones. Tracing back to antiquity, organized musical systems emerged globally, including in the ancient dynasties of China, Greece, India, and the Persian empires.

During the Medieval period (500 to 1400 CE), the Roman Catholic Church dominated European musical output. The church formalized Gregorian chant, a monophonic vocal tradition written down using square notes called neumes. This monophonic tradition gradually evolved into complex polyphony, paving the way for the Renaissance (1400 to 1600). The Renaissance triggered a rebirth of secular complexity, greatly accelerated by the mid-15th-century invention of the Gutenberg printing press, which in time made printed musical notation widely available.

This era gave way to the highly ornamented Baroque music period, the structurally rigorous Classical period, and the emotionally expansive Romantic period. The 20th and 21st centuries splintered into diverse intellectual movements, while an explosion of recorded popular genres like blues, jazz, rock, and pop emerged, driven by broadcasting technologies.

6. Famous Personalities in Music History

The evolution of global music is punctuated by legendary personalities who radically altered the trajectory of composition and performance.

- Wolfgang Amadeus Mozart: A prolific child prodigy of the Classical era, Mozart composed over 600 works. He is celebrated for his unparalleled melodic genius and for formalizing the structures of the symphony and the piano concerto.

- Ludwig van Beethoven: A crucial transitional figure between the Classical and Romantic eras. Despite severe hearing loss, Beethoven expanded the scope of the symphony, injecting profound personal emotion and aggressive dynamics into works like his monumental Ninth Symphony.

- Miles Davis: A revolutionary American jazz trumpeter, bandleader, and composer. He emerged from the bebop scene and was instrumental in the development of cool jazz, hard bop, and jazz fusion, fundamentally shifting the landscape of 20th-century instrumental music.

- Michael Jackson: Dubbed the “King of Pop”, he transformed the music video into a profound cinematic art form and revolutionized global pop music production, choreography, and racial integration on television networks like MTV.

7. Cognitive Neuroscience and Psychology

The human brain’s response to music is an intricate, multi-regional process that engages much of the neural architecture, far exceeding simple auditory processing. Human auditory perception ranges from roughly 20 Hz to 20,000 Hz. Pitch and other sound information is predominantly processed in the primary and secondary auditory cortices. These areas contain a highly organized tonotopic map that mirrors the place coding of the cochlea, where specific frequencies excite precise locations along the basilar membrane.

Music perception relies on two primary neural streams. The ventral stream (involving the auditory cortex and medial temporal lobe) is focused on connecting sound to semantic meaning. The dorsal stream matches auditory input with motor actions, heavily engaging the basal ganglia and supplementary motor area to process rhythm, beat perception, and motor planning.

A profound insight into musical cognition is revealed by the predictive coding theory. Magnetoencephalography studies demonstrate that the brain actively anticipates musical sequences. If a variation or error occurs in the expected sequence, it triggers a conscious prediction error originating in the auditory cortex. This highlights that musical enjoyment is heavily reliant on the brain’s continuous, subconscious calculation of probability and expectation.
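The idea of a prediction error can be illustrated with a toy statistical model. The sketch below is not the model used in the neuroimaging studies mentioned above; it is a first-order Markov (bigram) model with add-one smoothing, chosen only to show how an unexpected note carries higher surprisal, measured as -log2 of its estimated probability. The note names and training melody are made up for the example.

```python
import math
from collections import Counter, defaultdict

def train_bigram(sequences):
    """Count how often each note follows each other note."""
    counts = defaultdict(Counter)
    for seq in sequences:
        for prev, nxt in zip(seq, seq[1:]):
            counts[prev][nxt] += 1
    return counts

def surprisal(counts, prev, nxt, vocab_size, smoothing=1.0):
    """Surprisal in bits, -log2 P(nxt | prev), with additive smoothing."""
    total = sum(counts[prev].values())
    p = (counts[prev][nxt] + smoothing) / (total + smoothing * vocab_size)
    return -math.log2(p)

# Hypothetical training melodies: the pattern C-D-E repeats.
corpus = [["C", "D", "E", "C", "D", "E"]] * 3
model = train_bigram(corpus)
VOCAB = 4  # C, D, E, F

expected = surprisal(model, "C", "D", VOCAB)    # the continuation heard in training
unexpected = surprisal(model, "C", "F", VOCAB)  # a note never heard after C
print(f"expected: {expected:.2f} bits, unexpected: {unexpected:.2f} bits")
```

The gap between the two values is a crude stand-in for the size of the prediction error a deviant note would produce.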

8. Music and Mental Health Therapy

The profound emotional resonance of music is regulated by the limbic and paralimbic systems. Because the medial prefrontal cortex (a region linked to autobiographical memory and musical familiarity) is one of the last areas to degenerate in neurodegenerative diseases like Alzheimer’s, music therapy has emerged as a potent clinical and psychological intervention.

Passive listening to favored music triggers spontaneous activation in the amygdala and the ventromedial prefrontal cortex, heavily implicating the brain’s dopaminergic reward pathways. Listening is also associated with lower cortisol levels, which makes music a promising non-pharmacological complement to treatment for anxiety, depression, and chronic stress.

Active music therapy may yield even greater clinical results. Systematic reviews of randomized controlled trials indicate that interventions such as rhythmic auditory stimulation and group singing can significantly improve global cognition, verbal fluency, and the speed of information processing in patients with Alzheimer’s disease and Parkinson’s disease. By matching physical movements to a steady, externally provided musical beat, Parkinson’s patients can temporarily work around damaged neural pathways and often walk more smoothly, with fewer freezing episodes.

9. Social and Cultural Impact

On a macroscopic level, music functions as a vital sociological infrastructure, facilitating social cohesion, transmitting cultural values, and igniting ideological movements. As sociologist Paul Honigsheim observed, music is the most universally beloved and social of the arts, dependent on the combined effort of performers and audiences.

In religious contexts, music serves as an essential aid to worship. The Feedback Loop Model of worship psychology suggests that music facilitates a profound sense of divine presence. Communal musical experiences (such as singing, drumming, or dancing) amplify emotional pleasure and promote prosocial behavior through synchronized action. This phenomenon, known as synchronous arousal, strengthens social bonds regardless of overt physical coordination.

Historically, music has catalyzed sociopolitical transformation. During the 1960s Civil Rights Movement, church-based hymns like “We Shall Overcome” were adapted, complemented, and fused with popular music styles to create a unifying acoustic banner that bridged generational and racial divides.

10. Music in Media and Storytelling

Music is an indispensable pillar of narrative storytelling in multimedia formats, functioning as a psychological guide that dictates emotional pacing, world-building, and thematic depth. The American musical theater represents a highly evolved, integrated synthesis of dialogue, song, and dance. The genre matured into the integrated “book musical” in the mid-20th century, introducing the “I want” song, positioned early in the narrative to reveal the protagonist’s primary motivation.

In cinema, the function of the soundtrack evolved rapidly. The Golden Age of Hollywood film scoring was defined by composers adapting Richard Wagner’s leitmotif technique, which assigns specific musical phrases to characters or themes to shape audience emotion and anticipation. John Williams later used the technique famously in Jaws, where a two-note motif signals the shark.

The advent of video games introduced a radical new narrative paradigm: interactivity. Early video game music was heavily constrained by sound chips that offered only a few simple voices, giving birth to the enduring “chiptune” aesthetic. In contemporary titles, music shifts dynamically based on player choices using procedural, reactive systems. Video games have also evolved into platforms for virtual concerts, transforming the listener from a passive consumer into an active participant in an immersive, audiovisual narrative.
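One common way to make a score react to play is to layer pre-composed stems and fade them in as the action intensifies. The sketch below shows that idea in its simplest form; the stem names, thresholds, and the mapping from game state to intensity are all hypothetical and do not describe any specific game or audio engine.

```python
# Hypothetical stems and the intensity threshold at which each one enters.
LAYERS = [
    ("ambient_pad", 0.0),
    ("percussion", 0.3),
    ("bass", 0.5),
    ("lead_melody", 0.8),
]

def active_layers(intensity):
    """Return the stems that should be audible for an intensity in [0, 1]."""
    level = max(0.0, min(1.0, intensity))
    return [name for name, threshold in LAYERS if level >= threshold]

def intensity_from_state(enemies_nearby, player_health):
    """Toy mapping from game state to intensity; the weights are arbitrary."""
    danger = min(enemies_nearby, 5) / 5                      # 0.0 to 1.0
    fragility = 1.0 - max(0.0, min(1.0, player_health))      # 0.0 to 1.0
    return 0.7 * danger + 0.3 * fragility

print(active_layers(intensity_from_state(enemies_nearby=0, player_health=1.0)))
print(active_layers(intensity_from_state(enemies_nearby=3, player_health=0.5)))
print(active_layers(intensity_from_state(enemies_nearby=5, player_health=0.0)))
```

Because every stem is written to fit the same harmony and tempo, layers can enter or drop out at any moment without the music losing coherence.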

11. Music Education and Pedagogy

The transmission of musical knowledge and the cultivation of musical appreciation rely on deeply structured pedagogical philosophies. In the 20th century, several pioneering methodologies emerged, often referred to as the “Big Five”.

| Pedagogical Method | Founder / Origin | Core Philosophy and Instructional Techniques |
|---|---|---|
| Dalcroze Eurhythmics | Émile Jaques-Dalcroze | Emphasizes the human body as the primary instrument. Translates human emotion and musical elements into kinesthetic movement. |
| Kodály Method | Zoltán Kodály | Focuses heavily on music literacy and “inner hearing”. Utilizes traditional folk songs, movable-Do solfège, and Curwen hand signs. |
| Orff Schulwerk | Carl Orff | Centers on process, exploration, and play. Integrates speech, drama, body percussion, and a specific instrumentarium (xylophones, glockenspiels). |
| Suzuki Method | Shinichi Suzuki | The “mother-tongue” approach. Operates on the premise that children learn music much as they acquire language, through early, immersive listening and repetition. |
| Gordon Music Learning | Edwin Gordon | Concentrates heavily on “audiation”, the cognitive process of hearing and comprehending music in the mind when the sound is not physically present. |

To combat the structural marginalization of non-Western traditions, modern educators are increasingly adopting Culturally Sustaining Pedagogy, which integrates kid culture, such as video game soundtracks and digital media dances, directly into the classroom to increase student agency and cultural literacy.

12. Archiving and Audio Preservation

The preservation of acoustic history relies on the ability to capture the ephemeral nature of sound on physical media, a process that has evolved through four distinct eras: the Acoustic era, the Electrical era, the Magnetic era, and the Digital era. The invention of the phonautograph by Édouard-Léon Scott de Martinville in 1857 made it possible for the first time to record sound as a visual trace, although the device could not play it back.

Modern archiving is a highly specialized discipline requiring precise methodologies to combat degradation. Professional preservation standards dictate that analog playback machines must be meticulously aligned to original recording levels. Furthermore, any proprietary analog noise reduction formats must be properly decoded before digital capture. Organizations such as the Audio Engineering Society (AES) actively work to establish these critical technical standards.

13. Music Industry Economics

The economic architecture of the modern music industry has been entirely restructured by digital technology, with streaming now the dominant source of revenue. However, the prevailing per-stream royalty payments are so small that the model is largely unsustainable for unsigned or mid-tier performers, leading many to abandon the profession.

This pervasive economic precarity has catalyzed a radical financialization of the industry: the assetization of music copyrights. Driven by Royalty Sharing Marketplaces, future music earnings are securitized and fractionalized into investment assets traded publicly on the internet. In this system, investors purchase portions of a catalog’s future royalties, viewing them as “uncorrelated assets” that generate revenue regardless of macroeconomic conditions like federal interest rates.
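The arithmetic behind both points is simple. The sketch below uses entirely hypothetical figures (a flat per-stream rate of 0.004 currency units, a target income of 30,000, and a 2.5% catalog share); real payouts vary by platform, territory, and contract, and no actual rate is implied.

```python
import math
from decimal import Decimal

def streams_needed(target_income, per_stream_rate):
    """Streams required to earn `target_income` at a flat per-stream rate."""
    return math.ceil(target_income / per_stream_rate)

def investor_payout(period_royalties, share_fraction):
    """Royalties owed to an investor holding `share_fraction` of a catalog."""
    if not Decimal(0) <= share_fraction <= Decimal(1):
        raise ValueError("share_fraction must be between 0 and 1")
    return period_royalties * share_fraction

# Hypothetical numbers for illustration only.
rate = Decimal("0.004")
print(streams_needed(Decimal("30000"), rate))                 # streams for the target income
print(investor_payout(Decimal("12000"), Decimal("0.025")))    # payout on a 2.5% share
```

`Decimal` is used instead of floating point so that currency amounts divide and multiply without rounding surprises.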

14. Streaming Apps and Platforms

The distribution of global music is now largely controlled by massive Music Streaming Platforms (MSPs). These applications have democratized access to an enormous share of the world’s recorded audio, and streaming accounted for over 62% of global recorded music revenues in 2020.

- Spotify: The undisputed global market leader. Spotify is famous for its highly advanced algorithmic discovery playlists (like Discover Weekly) and its massive expansion into podcasting and audiobooks.

- Apple Music: Deeply integrated into the iOS ecosystem. It champions high-fidelity audio by offering lossless streaming and specialized spatial audio formats powered by Dolby Atmos.

- YouTube Music: Leverages the largest video catalog on earth to provide rare live performances, unauthorized remixes, and covers that are entirely unavailable on traditional audio-only platforms.

- Tidal and Amazon Music: These platforms cater specifically to audiophiles by offering ultra-high-resolution, master-quality audio streams designed for high-end studio equipment.

15. Copyright and Legal Frameworks

The legal framework governing this ecosystem is equally fraught, heavily focused on the practice of sampling and copyright infringement. Current U.S. copyright law requires the clearance of two distinct copyrights for sampling: the master sound recording (owned by the artist or label) and the underlying composition (owned by the songwriter or publisher).

While the Biz Markie case (Grand Upright Music, Ltd. v. Warner Bros. Records Inc., 1991) firmly established that uncleared commercial sampling constitutes infringement, defense mechanisms exist. In the United States, the de minimis doctrine allows for minor sampling without infringement, provided the average audience cannot recognize the appropriation, although courts have not applied it uniformly to sound recordings. Conversely, the landmark 2015 Blurred Lines verdict severely tightened intellectual property enforcement. The outcome was widely read as allowing infringement to be proven based on a song’s “feel” or “groove,” rather than literal melodic or lyrical copying. It generated a chilling effect across the industry, forcing contemporary creators to navigate a minefield where cultural homage can easily be penalized as financial theft.

16. Global Music Awards

Excellence in the music industry is recognized by a series of highly prestigious global awards, providing artists with critical commercial validation and historical prestige.

- The Grammy Awards: Presented by the Recording Academy in the United States, this is widely considered the absolute highest peer-voted honor in the global music industry, recognizing outstanding achievement across all genres.

- The Brit Awards: Hosted by the British Phonographic Industry, this is the highest-profile music award in the United Kingdom, recognizing massive domestic and international commercial success.

- Billboard Music Awards: Unlike peer-voted ceremonies, Billboard awards are based purely on quantitative commercial performance, including album sales, digital downloads, radio airplay, and streaming metrics.

- Eurovision Song Contest: A massive international song competition that draws well over a hundred million viewers across Europe and the globe, famous for launching the careers of iconic acts like ABBA and Celine Dion.

17. Guinness World Records in Music

In terms of extraordinary historical achievements, the Guinness World Records tracks monumental milestones that define the peak of commercial and physical musical success.

The best-selling album of all time remains Michael Jackson’s Thriller, with estimated global sales exceeding 70 million copies. At the time of writing, the most streamed song on Spotify is The Weeknd’s “Blinding Lights”. In the realm of physical endurance, Chilly Gonzales set a record for the longest solo concert in 2009, playing the piano continuously for 27 hours, 3 minutes, and 44 seconds in Paris.

18. Future Horizons: AI and Spatial Audio

The trajectory of musical creation and consumption is currently undergoing a violent acceleration due to unprecedented advancements in generative Artificial Intelligence (AI), algorithmic composition, and spatial audio environments.

Machine learning and generative AI systems have transitioned from experimental novelties to practical, highly sophisticated tools. Modern platforms like Suno AI and Udio use complex neural networks to generate fully synthesized, high-fidelity music from simple text prompts, fundamentally altering the role of the human producer. However, empirical research indicates that a psychological phenomenon termed “narrative listening” (where a listener organically imagines a story unfolding) is significantly diminished when audiences believe the music is AI-generated rather than human-composed.

Simultaneously, the physical playback and consumption of audio is being revolutionized by spatial audio and object-based rendering. Moving beyond traditional channel-based constraints, spatial audio treats individual sound sources as independent objects that can be freely mapped and manipulated within a three-dimensional acoustic space. Technologies relying on Head-Related Transfer Functions (HRTF) and first-order Ambisonics mimic the complex psychoacoustics of human hearing, delivering highly immersive binaural experiences that place the listener directly inside the recording studio.
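As a small illustration of treating a sound as an object with a direction, the sketch below encodes one mono sample into the four channels of first-order Ambisonics. It assumes the traditional B-format convention (W scaled by 1/√2, azimuth measured counter-clockwise from straight ahead); other conventions such as AmbiX order and scale the channels differently, and decoding to headphones through an HRTF is a separate step not shown here.

```python
import math

def encode_first_order(sample, azimuth_deg, elevation_deg):
    """Encode a mono sample arriving from the given direction into (W, X, Y, Z)."""
    az = math.radians(azimuth_deg)
    el = math.radians(elevation_deg)
    w = sample / math.sqrt(2)                    # omnidirectional component
    x = sample * math.cos(az) * math.cos(el)     # front-back axis
    y = sample * math.sin(az) * math.cos(el)     # left-right axis
    z = sample * math.sin(el)                    # up-down axis
    return w, x, y, z

print(encode_first_order(1.0, azimuth_deg=0, elevation_deg=0))    # straight ahead
print(encode_first_order(1.0, azimuth_deg=90, elevation_deg=0))   # hard left
print(encode_first_order(1.0, azimuth_deg=0, elevation_deg=90))   # directly above
```

Moving a source is then just a matter of changing the two angles over time; the channel layout itself never changes, which is what frees the mix from a fixed loudspeaker arrangement.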