| Meta Smart Glasses Ecosystem | |
|---|---|
| Primary Developer | Meta Platforms, Inc. |
| Primary Hardware Partner | EssilorLuxottica (Ray-Ban, Oakley) |
| Initial Launch Date | September 2021 |
| Global Market Share | 82% (End of 2025) |
| Primary Processing Chip | Qualcomm Snapdragon AR1 Gen 1 |
| Display Technology | 600×600 LCoS Geometric Waveguide |
| Biometric Input | Neural Band (Surface EMG) |
| Primary AI Engine | Llama 4 (Multimodal) |
| Peak Display Brightness | 5,000 Nits |
| Major Legal Dispute | Solos Technology (Patent Infringement) |
| Primary Regulatory Blockade | EU Battery Regulation |
| Top Competitors | Apple (N50), Samsung and Google (Android XR) |
| Meta Reality Labs | Reality Labs Portal |
| Hardware Partner | EssilorLuxottica Corporate |

Smart glasses are a class of wearable computing devices that integrate artificial intelligence, optical displays, spatial microphones, and biomechanical sensors directly into traditional eyewear frames. Developed primarily by Meta Platforms through its Reality Labs division, these devices sit at the forefront of the ambient computing paradigm. By fusing digital overlays with the physical environment, they allow users to engage with complex software systems without looking down at a mobile phone screen.

Historically, the smart glasses hardware category suffered profound commercial failures. Products like the original Google Glass faced acute social friction, massive privacy backlash from the general public, and a severe lack of compelling daily utility. Meta successfully bypassed these historical bottlenecks through a highly calculated, bottom-up hardware strategy. By partnering with legacy fashion brands and prioritizing aesthetic integration over bulky augmented reality technology, Meta transformed basic wearable cameras into highly complex, multimodal AI assistants capable of continuous environmental analysis, visual indexing, and real-time linguistic translation.

Today, these devices are altering the revenue composition of the global extended reality hardware market. This report examines the Meta smart glasses ecosystem in full: its historical partnerships, its optical working principles, the evolution of its product portfolio (including new athletic and optical variants), its financial market impact, and the increasingly complex privacy regulations and intellectual property litigation governing its global deployment.

1. The Ambient Computing Shift

The trajectory of human-computer interaction is currently undergoing its most significant paradigm shift since the transition from desktop computing to mobile telephony. Over the past half-decade, the technology sector has decisively pivoted away from immersive, isolating virtual reality environments. The new frontier is ambient, socially integrated augmented reality and artificial intelligence wearables designed to exist entirely in the background of everyday life.

At the vanguard of this structural transformation is Meta Platforms. Through a multi-billion-dollar recalibration of its Reality Labs division, Meta established the foundational architecture for smart eyewear. Rather than engineering a bulky augmented reality display and attempting to force it into an uncomfortable wearable form factor, Meta utilized a historically validated fashion silhouette. They embedded the maximum allowable technological payload without compromising the frame’s weight, acoustic properties, or visual aesthetic, achieving an unprecedented level of commercial success and societal acceptance that eluded previous hardware generations.

2. Strategic Eyewear Partnerships

The genesis of Meta’s dominance in the wearable sector is inextricably linked to its strategic manufacturing alliances. Recognizing that wearable technology is inherently a fashion acquisition for the end consumer, Meta sought to entirely offload the aesthetic and optometric engineering to an established global market leader rather than attempting to build a fashion brand from scratch.

The foundational partnership between Meta and EssilorLuxottica, the Franco-Italian eyewear conglomerate that owns Ray-Ban, Oakley, and LensCrafters, was publicly announced on September 20, 2020, by Chief Executive Officer Mark Zuckerberg. EssilorLuxottica contributed its global retail network, distribution channels, and decades of optical engineering expertise. Meta supplied the computational infrastructure, the localized edge processors, and the artificial intelligence software layers. The partnership proved so commercially viable that on September 17, 2024, the two companies announced a formal extension into the next decade. By 2025, the collaboration had expanded beyond the classic Ray-Ban brand to include Oakley, specifically targeting the lucrative high-performance athletic demographic.

3. The Solos Patent Lawsuit

The rapid commercialization and projected revenue of the Meta and EssilorLuxottica partnership have predictably attracted intellectual property litigation from legacy inventors. The most significant legal threat stems from a multibillion-dollar patent infringement lawsuit filed by Solos Technology, a smart eyewear pioneer based in Hong Kong and Massachusetts.

Solos alleges that the foundational architecture of the Ray-Ban Meta glasses directly infringes upon their protected patents regarding multimodal sensing, sensor fusion, spatial audio processing, directional beamforming, and intelligent assistant integration frameworks. The expansive lawsuit traces the origin of the alleged intellectual property theft back to 2015, claiming that Solos explicitly introduced Oakley executives to their advanced prototype technologies during confidential meetings. Furthermore, the complaint asserts that a Meta product manager, previously an MIT Sloan Fellow, had authored a detailed 2021 research study titled Audio Wearable Product Strategy: Expanding User Experience for Solos Smart Glasses before subsequently joining Meta’s Reality Labs division.

Solos contends that by the time Meta began commercializing its smart glasses in late 2021, the company had accumulated years of deep, direct knowledge of Solos’ proprietary technology. With the smart glasses product category projected to generate upwards of $10 billion in revenue by 2030, Solos is seeking substantial financial restitution and injunctions that would immediately halt global sales. Should Solos prevail in federal court, Meta could face costly licensing agreements or severe supply chain disruptions for its hardware division.

4. LCoS and Waveguide Optics

The engineering architecture of Meta’s smart glasses represents an absolute masterclass in extreme hardware miniaturization. A vital differentiator in later generations, specifically the Meta Ray-Ban Display released in 2025, is the implementation of a high-efficiency visual interface embedded directly within the right lens of the frame without adding excessive weight or bulk.

While competitors frequently utilize diffractive waveguides paired with Micro-OLED displays, Meta opted for a highly specialized geometric waveguide architecture utilizing liquid crystal on silicon (LCoS) projection technology. Developed in close collaboration with the Israeli augmented reality optics firm Lumus, and utilizing highly specialized Schott glass substrates, this geometric waveguide employs partially reflective micromirrors embedded deep within the lens structure. When the LCoS microdisplay projects an image into the edge of the lens, the light bounces through the waveguide and is directed outward by the microreflectors to form a floating, full-color holographic image directly below the user’s natural eyeline.

This architectural trade-off prioritizes optical efficiency and social acceptability over an expansive field of view. Diffractive waveguides often suffer from significant forward light leakage, creating an unnatural, glowing, cyborg-like aesthetic that repels fashion-conscious consumers. Geometric waveguides substantially reduce this outward leakage. The resulting display reaches 5,000 nits of peak brightness, keeping text and navigation prompts legible even in direct sunlight. With a 600×600 resolution, a 90 Hz refresh rate, and a narrow 20-degree field of view, the device is explicitly designed for contextual micro-interactions rather than immersive, world-blocking spatial computing experiences.
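The resolution and field-of-view figures above imply an angular pixel density, which is a common way to compare how sharp such displays look. The following is a minimal sketch of that arithmetic. The text does not say whether the 20 degrees is measured along one edge or across the diagonal, so both readings are computed, and both are assumptions rather than published specifications.

```python
import math

def pixels_per_degree(pixels: float, fov_degrees: float) -> float:
    """Average angular pixel density across a field of view."""
    return pixels / fov_degrees

WIDTH = HEIGHT = 600   # resolution quoted in the text
FOV_DEG = 20.0         # field of view quoted in the text

# Assumption A: the 20 degrees spans one edge of the square image.
ppd_edge = pixels_per_degree(WIDTH, FOV_DEG)

# Assumption B: the 20 degrees is measured corner to corner.
diagonal_pixels = math.hypot(WIDTH, HEIGHT)
ppd_diagonal = pixels_per_degree(diagonal_pixels, FOV_DEG)

print(f"edge: {ppd_edge:.1f} ppd, diagonal: {ppd_diagonal:.1f} ppd")
```

Under either reading, concentrating 600×600 pixels into a narrow field of view yields dense, text-friendly imagery, which is consistent with the focus on glanceable micro-interactions.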

5. Neural Bands and EMG Input

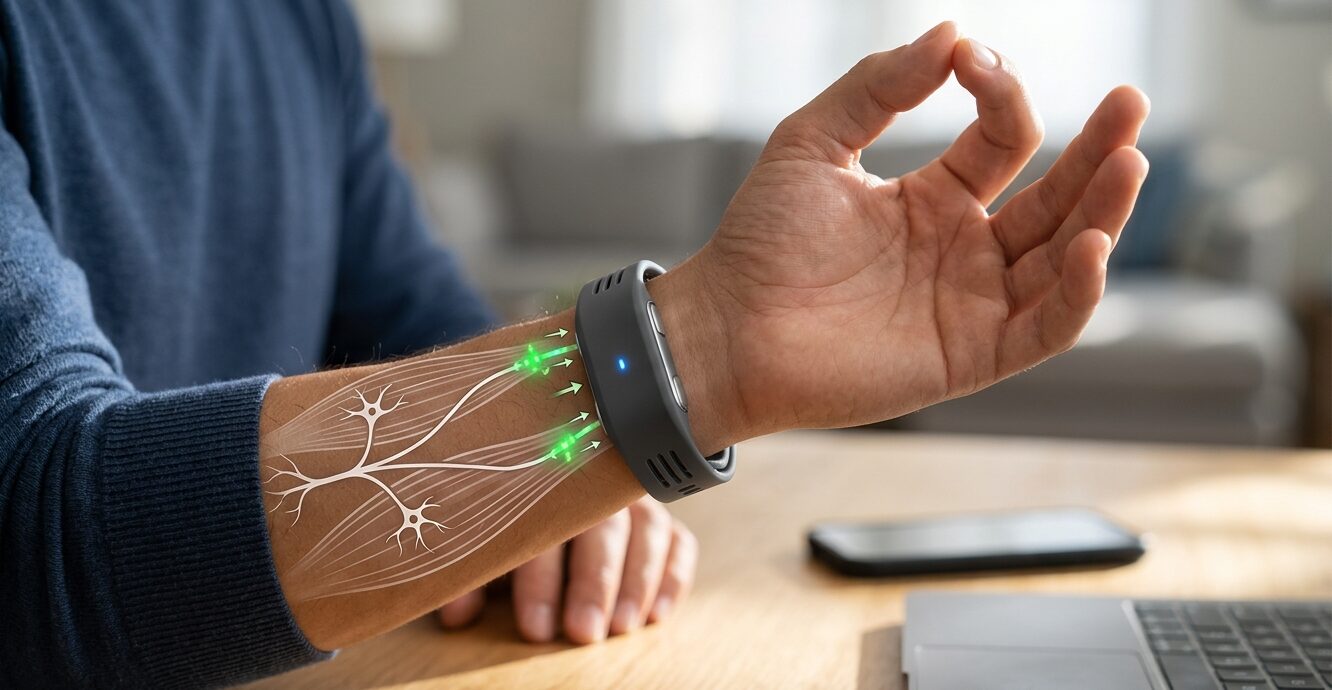

The historical bottleneck in the widespread consumer adoption of head-mounted displays has always been the absence of an intuitive, socially discreet input mechanism. Voice commands are frequently inappropriate or highly disruptive in public transit or professional office settings, while complex optical hand-tracking consumes substantial battery power and requires the user to raise their hands awkwardly into the camera’s field of view. To resolve this fundamental usability flaw, Meta introduced the Meta Neural Band in September 2025.

The Neural Band operates on the sophisticated biological principles of surface electromyography (EMG). The wrist-worn device utilizes a highly dense array of non-invasive sensors worn tightly against the skin to detect the minute electrical signals generated by motor neurons as they instruct muscles and tendons in the hand to contract. Because the system measures the bio-electrical intent traversing the peripheral nervous system rather than relying on a camera tracking physical movement, it can translate incredibly subtle micro-gestures into discrete digital commands, such as a thumb and index finger pinch, a slight wrist flick, or even the neural signature of typing a specific letter on an imaginary keyboard.

The physical construction of the band prioritizes extreme daily durability, incorporating Vectran, a high-performance textile famously utilized in Mars Rover landing systems to prevent tearing. Optimal sensor contact is absolutely mandatory for accurate bio-signal detection. Thus, the band is manufactured in three distinct sizes and requires a professional, in-person fitting at authorized retail locations to ensure the sensors align perfectly with the user’s specific anatomy. Accompanied by integrated accelerometers and gyroscopes, the computational module on the wrist processes these complex biological signals locally, transmitting the interpreted commands via Bluetooth 5.3 to the glasses in milliseconds.
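To make the idea of turning muscle activity into discrete commands more concrete, here is a simplified sketch of one classic approach: compute a moving RMS envelope over a single channel and flag the spans where it exceeds a threshold. This is not Meta's algorithm, which the text does not describe. The signal is a synthetic sine burst standing in for real EMG, and the function names, window length, and threshold are all illustrative assumptions.

```python
import math

def moving_rms(samples, window):
    """Root-mean-square amplitude over a sliding window."""
    out = []
    for i in range(len(samples) - window + 1):
        chunk = samples[i:i + window]
        out.append(math.sqrt(sum(x * x for x in chunk) / window))
    return out

def detect_bursts(envelope, threshold):
    """Return (start, end) index pairs where the envelope stays above threshold."""
    bursts, start = [], None
    for i, value in enumerate(envelope):
        if value > threshold and start is None:
            start = i
        elif value <= threshold and start is not None:
            bursts.append((start, i))
            start = None
    if start is not None:
        bursts.append((start, len(envelope)))
    return bursts

def synthetic_channel(length=1000, burst=(400, 500)):
    """Stand-in signal: a faint sine baseline with one strong burst (not real EMG)."""
    samples = []
    for i in range(length):
        amplitude = 1.0 if burst[0] <= i < burst[1] else 0.05
        samples.append(amplitude * math.sin(2 * math.pi * i / 10))
    return samples

signal = synthetic_channel()
envelope = moving_rms(signal, window=50)
bursts = detect_bursts(envelope, threshold=0.3)
print(bursts)  # one span of envelope indices overlapping the injected burst
```

A production system would presumably combine many channels and a trained classifier to tell a pinch from a wrist flick. The threshold detector only illustrates the first step, separating intentional activation from baseline.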

6. Edge Computing and Cloud AI

The software backbone animating the smart glasses hardware relies heavily on a specialized hybrid computational architecture combining on-device processing, high-speed smartphone connectivity, and massive cloud-based AI server clusters. The system must constantly navigate extreme thermal constraints and the severe battery limitations inherent to a sub-50-gram wearable device resting on a human face.

To achieve this delicate balance, routine and immediate tasks such as audio playback, basic high-resolution photo capture, and continuous wake-word recognition are processed entirely locally on the edge via the highly efficient Qualcomm Snapdragon AR1 Gen 1 processor. However, by late 2025 and early 2026, the ecosystem evolved into an ambitious new paradigm known as Continuous Vision. Utilizing advanced self-supervised learning vision backbones like DINOv3 and V-JEPA 2, the AI assistant can maintain an active, unblinking vision session for extended periods, analyzing the user’s surroundings without needing explicit verbal prompts.

Heavy computational workloads, such as complex real-time language translation, dense object indexing for memory retrieval, and intensive generative tasks, simply cannot be handled by the glasses alone. These tasks are seamlessly offloaded to Meta’s server infrastructure via the paired smartphone’s 5G or Wi-Fi 6E connection. This sophisticated hybrid architecture ensures sub-second latency for local physical commands while permitting complex, data-heavy cloud-based AI reasoning to return answers to the user’s ear within a highly acceptable three-second response window.
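The split described above can be pictured as a small dispatch table. The sketch below is purely illustrative: the task names, the `route` function, and the one-second edge budget (standing in for "sub-second") are hypothetical, while the three-second cloud budget comes from the text.

```python
from dataclasses import dataclass
from enum import Enum

class Layer(Enum):
    EDGE = "edge"
    CLOUD = "cloud"

# Hypothetical task names based on the examples in the text
LOCAL_TASKS = {"audio_playback", "photo_capture", "wake_word"}
CLOUD_TASKS = {"live_translation", "object_indexing", "generative_query"}

# Latency targets in seconds (edge value is an assumed stand-in for "sub-second")
LATENCY_BUDGET_S = {Layer.EDGE: 1.0, Layer.CLOUD: 3.0}

@dataclass
class Route:
    task: str
    layer: Layer
    budget_s: float

def route(task: str, phone_connected: bool) -> Route:
    """Pick a processing layer; cloud tasks need the paired phone's network link."""
    if task in LOCAL_TASKS:
        layer = Layer.EDGE
    elif task in CLOUD_TASKS:
        if not phone_connected:
            raise ConnectionError(f"{task} needs the paired phone's network link")
        layer = Layer.CLOUD
    else:
        raise ValueError(f"unknown task: {task}")
    return Route(task, layer, LATENCY_BUDGET_S[layer])

print(route("wake_word", phone_connected=False))
print(route("live_translation", phone_connected=True))
```

The point of the sketch is that local tasks keep working without a connection, while cloud tasks fail fast when the phone link is missing instead of waiting out the latency budget.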

7. The Meta AI Ecosystem

The vital bridge between the wearable hardware and the massive cloud infrastructure is the companion smartphone application. Originally launched as the basic Facebook View app, the software underwent a total architectural overhaul in April 2025, relaunching as the incredibly robust, standalone Meta AI App. This monumental transition was catalyzed by the integration of the powerful Llama 4 large multimodal model and the Muse Spark framework originating from the highly secretive Meta Superintelligence Labs.

The Meta AI App serves as the central hub for managing up to seven paired devices simultaneously. It features full-duplex speech technology, which allows natural, interruptible conversations: the user can speak over the AI and does not need to repeat the wake word on every turn. The software ecosystem enables a suite of features that change how users interact with the digital world.

| Software Feature | Description and Utility | Processing Layer |
|---|---|---|
| Continuous Vision | Allows the glasses to maintain active observation for context-aware queries based on the user’s immediate physical surroundings, providing proactive suggestions. | Cloud (via 5G tether) |
| Neural Handwriting | Translates EMG wrist micro-gestures into text, integrating directly with iMessage, WhatsApp, and Messenger to allow for completely silent typing during meetings. | Edge (Neural Band) |
| Be My Eyes Integration | Connects visually impaired users with sighted volunteers via live POV video streaming, allowing the volunteer to provide real-time navigational assistance safely. | Cloud and Smartphone |
| Memory Indexing | Allows the AI to actively catalog visual data over time to retrieve lost items based on past video captures, searching vast personal visual databases in seconds. | Cloud Infrastructure |
| Live Translation | Real-time, back-and-forth verbal or text-based translation supporting over 20 languages including Mandarin, Korean, and Arabic, projecting text onto the display. | Cloud (Llama 4) |

8. Athletic Software Integrations

To thoroughly complement the rugged, sports-focused Oakley Meta Vanguard frames, Meta has introduced deep software integrations with major health platforms including Garmin, Strava, and Apple Health. Athletes can use natural voice commands to ask Meta AI to create highly complex custom workouts, such as verbally requesting a 1-hour interval bike ride targeting 20 mph, and subsequently receive real-time performance insights, heart rate data, and pacing metrics directly through the directional open-ear speakers while they train without ever breaking their stride.

9. Generation 1 and 2 Hardware

The iterative development of Meta’s smart glasses demonstrates a clear, highly accelerated trajectory from simple wearable cameras to sophisticated, indispensable AI hubs. Meta has rapidly expanded its product line to encompass both high-fashion lifestyle aesthetics and rugged performance athletic segments to capture a massive demographic range.

Generation 1: Ray-Ban Stories (2021) served as Meta’s foundational proof of concept. Originally launched at $299, it embedded dual 5-megapixel cameras and open-ear speakers into a familiar, non-threatening frame. As of 2026, the Gen 1 models are heavily discounted, selling for about $224 on secondary and discount retail markets.

Generation 2: Ray-Ban Meta (2023) represented a massive hardware overhaul that legitimized the category. The standard AI-integrated glasses are available in classic Wayfarer, Headliner, and Skyler styles. These baseline frames start at $379, with prices scaling upward for premium translucent frame choices or photochromic Transitions lenses. The camera system was significantly upgraded to a 12-megapixel ultra-wide lens, allowing for high-fidelity, highly stabilized 3K video capture.

In 2025, Meta aggressively expanded this technology into the Oakley brand to capture the active lifestyle demographic. The Oakley Meta HSTN features a highly aggressive, sport-inspired frame design priced around $499. For extreme weather conditions and intense physical activity, the Oakley Meta Vanguard is a high-performance athletic model featuring a stringent IP67 water and dust resistance rating, alongside highly advanced aerodynamic wind-noise reduction arrays, also priced at $499.

10. Generation 2 Optics (Launched April 2026)

By April 2026, the product lineup expanded significantly with the introduction of the highly anticipated Generation 2 Optics series, designed specifically to capture the massive demographic of daily prescription glasses wearers. The Ray-Ban Meta Blayzer Optics is a rectangular, incredibly lightweight, and slimmer frame explicitly optimized for heavy prescription wearers and all-day comfort, priced at $499. Its counterpart, the Ray-Ban Meta Scriber Optics, features a rounded frame with highly customizable interchangeable nose-pads and robust overextension hinges to comfortably accommodate wildly diverse facial structures and prevent fatigue, also priced at a premium $499.

11. The Heads Up Display Era

Announced at the highly publicized Meta Connect conference and officially launched to the public on September 30, 2025, the Meta Ray-Ban Display represents the absolute pinnacle of the company’s currently available consumer technology. Priced at a steep premium of $799, this highly advanced model successfully bridges the gap between basic screenless smart glasses and fully immersive, heavy AR headsets.

Featuring the full-color 600×600 LCoS geometric waveguide display built directly into the right lens, the glasses allow users to view real-time language translations, glance at turn-by-turn pedestrian navigation prompts, and interact with dynamic visual AI widgets without breaking eye contact with their physical environment. The Display glasses are bundled exclusively with the Meta Neural Band to guarantee a completely silent, hands-free operational loop in public spaces. Due to severe manufacturing complexities and low initial optical yields, the Meta Ray-Ban Display remains an exclusive product, restricted solely to the United States market and requiring a mandatory in-person fitting appointment to ensure correct optical alignment.

12. Future Models: Generation 3

Looking forward, Meta is actively preparing its highly anticipated third generation of smart glasses to maintain its dominant market position against incoming competitors. Operating under the highly secretive internal codenames Aperol for the sunglass variants and Bellini for the classic optical frames, these Gen 3 models are expected to launch around the Meta Connect 2026 conference or early 2027. They will feature massively advanced AI memory assistance, highly enhanced real-time object recognition algorithms, and significantly improved battery life utilizing next-generation high-density power cells.

13. Internal Prototypes: Project Orion

Concurrently, Meta continues intensive internal development on the mythical Project Orion (Artemis). Orion represents true, fully immersive holographic augmented reality packed into a thick but wearable frame. With a massive diagonal field of view of 70 degrees and weighing less than 100 grams, Orion utilizes incredibly exotic silicon carbide materials to channel light with zero distortion. This device is currently not available for retail sale and is only being offered to a tightly controlled, small group of internal developers, as each single prototype unit costs an estimated $10,000 to produce due to the immense difficulty of manufacturing the silicon carbide lenses.

14. Financials and Market Dominance

The aggressive corporate pivot toward artificial intelligence and wearable computing, initially met with profound skepticism and massive sell-offs by Wall Street analysts, has yielded historically unprecedented financial recoveries for Meta Platforms. Trading near a staggering $674 per share in mid-2026, the company boasts a colossal market capitalization of $1.71 trillion. Meta closed the highly successful 2025 fiscal year generating an astonishing $200.97 billion in total global revenue.

The most profound structural shift occurred inside the notoriously unprofitable Reality Labs division. In a monumental watershed moment for the spatial computing industry, the 2025 fiscal data revealed that smart glasses revenue of $2.15 billion fundamentally eclipsed Quest VR hardware revenue of $660 million for the very first time in the division’s history, proving that ambient AR is the financially superior path forward.

| XR and Spatial Computing Platform | 2025 Estimated Hardware Revenue | Lifetime Estimated Revenue |
|---|---|---|
| Meta Reality Labs (Smart Glasses Only) | $2.15 Billion | $3.5 Billion |
| Meta Reality Labs (Quest Hardware Only) | $660 Million | $7.5 Billion |
| PlayStation VR1 and VR2 | $700 Million | $3.6 Billion |
| Apple Vision Pro | $297 Million | $1.66 Billion |
| XREAL | $145 Million | $335 Million |
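The relative scale of the 2025 figures in the table is easier to see as ratios. The short sketch below works through that arithmetic using only the numbers listed above. The shares are computed among the five listed platforms only, which is an assumption about the comparison set and not a statement about the whole XR market.

```python
# 2025 estimated hardware revenue in millions of USD, copied from the table above
revenue_2025 = {
    "Meta smart glasses": 2150,
    "Meta Quest": 660,
    "PlayStation VR1 and VR2": 700,
    "Apple Vision Pro": 297,
    "XREAL": 145,
}

total = sum(revenue_2025.values())
shares = {name: value / total for name, value in revenue_2025.items()}
glasses_to_quest = revenue_2025["Meta smart glasses"] / revenue_2025["Meta Quest"]

print(f"Total across listed platforms: ${total:,} million")
for name, share in shares.items():
    print(f"{name}: {share:.1%}")
print(f"Smart glasses vs Quest: {glasses_to_quest:.2f}x")
```

On these estimates, smart glasses brought in a little over three times the 2025 revenue of Quest hardware and more than half of the combined total for the listed platforms.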

According to global shipment data compiled by IDC and Counterpoint Research, Meta captured 82% of the global smart glasses market by the end of 2025. Industry analysts forecast that the global AI smart glasses market will enter a breakout phase in 2026, quadrupling in revenue as sales volumes leap to 20 million units globally and expanding the total wearable market value to $5.6 billion.

15. Record-Breaking Sales

Despite the ongoing, highly publicized privacy controversies and legal battles, the hardware devices are a massive, undeniable commercial success. In their incredibly bullish Q4 2025 earnings report, EssilorLuxottica officially confirmed they sold an astonishing 7 million smart glasses in 2025 alone. This phenomenal, unexpected volume brings the total estimated lifetime sales of the wearable devices to around 9 million units globally, cementing the product category as a mainstream consumer staple rather than a niche tech novelty.
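The two unit figures above imply how concentrated sales were in a single year. This small sketch works that out. It relies only on the rounded numbers quoted in the text, so the results are approximate.

```python
# Unit figures quoted in the text, in millions
units_2025 = 7.0
units_lifetime = 9.0

units_before_2025 = units_lifetime - units_2025
share_2025 = units_2025 / units_lifetime

print(f"Sold before 2025: about {units_before_2025:.0f} million")
print(f"2025 share of lifetime sales: {share_2025:.0%}")
```

In other words, roughly three of every four units ever sold were sold in 2025.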

16. The Global Competitor Landscape

As Meta aggressively proves the financial viability of the smart glasses market and generates billions in highly coveted hardware revenue, the competitive landscape has rapidly expanded to include the world’s most powerful technology conglomerates.

Apple Inc. is aggressively developing its own sophisticated smart glasses, internally codenamed Project N50. Following the mixed, highly critical commercial reception of the incredibly expensive Vision Pro headset, Apple rapidly pivoted toward a lightweight wearable tightly integrated with the iPhone ecosystem. Analysts expect Apple to heavily leverage the highly capable Siri assistant and Apple Intelligence to handle complex queries. Given the massive privacy controversies plaguing Meta, industry experts heavily speculate Apple may initially omit external cameras entirely to completely outmaneuver Meta in consumer trust and privacy optics.

17. Fierce Upcoming Competition

The technology industry has taken note of Meta’s 82% market share, and major players are entering the space to challenge that lead. Apple is reportedly testing four different frame designs for its own smart glasses, targeting a 2026 or 2027 release. Meanwhile, Samsung and Google are collaborating on Galaxy AI Smart Glasses powered by the new Android XR operating system, which are expected to launch in late 2026. Xiaomi also recently launched its Mijia Smart Audio Glasses to capture the budget demographic; they weigh 27.6 grams and include a privacy mode that limits sound leakage to bystanders in quiet environments.

18. European Union Regulations

Beyond the fierce corporate competition and massive intellectual property disputes, the most formidable barrier to Meta’s continued global expansion lies in the labyrinthine, highly punitive regulatory frameworks governing data privacy and hardware sustainability in Europe.

The most glaring geographic omission in Meta’s massive global rollout strategy is the European Union. Despite immense consumer demand and a massive potential user base, the premium Meta Ray-Ban Display model remains conspicuously absent from European retail channels. This total blackout is the direct result of a massive collision between cutting-edge hardware design and the upcoming 2027 EU Battery Regulation. The stringent mandate requires that virtually all portable batteries in consumer electronics be readily removable and easily replaceable by the end user using basic tools.

Engineering a user-serviceable battery door into the ultra-compact, lightweight frame of a pair of smart glasses poses a near-insurmountable engineering challenge. Complying with the battery mandate would compromise the aesthetic silhouette of the frames and destroy the IP67 water resistance rating. As a result, the Meta Ray-Ban Display is effectively barred from the European market under its current design. Simultaneously, enforcement of the EU AI Act mandates rigorous, expensive risk-based assessments for any artificial intelligence system that processes biometric data or engages in real-time environmental monitoring on public streets.

19. The European Union Blockade

If you live in Europe, you currently cannot buy the flagship Meta Ray-Ban Display glasses. Meta has paused the hardware rollout there due to intense regulatory scrutiny. The blackout is driven by compliance issues with the EU AI Act, ongoing investigations into GDPR data violations, and the upcoming 2027 EU Battery Regulation, which will require consumer electronics to have user-replaceable batteries. That requirement poses a major, potentially unsolvable engineering hurdle for small smart glasses designed to look like normal fashion accessories.

20. GDPR and Data Controversies

Compounding the massive legislative hurdles are acute, highly publicized violations of the General Data Protection Regulation (GDPR). In early 2026, explosive investigative reports published by major Swedish media outlets revealed severe, systemic lapses in Meta’s supposedly secure data anonymization protocols.

The massive investigation discovered that thousands of hours of highly personal point-of-view footage captured by EU-based Ray-Ban smart glasses users had been actively transmitted to third-party data annotation facilities located in Nairobi, Kenya, operated by the contractor Sama. These annotators, tasked with manually labeling massive amounts of video data to train Meta’s Llama 4 computer vision models, reported viewing highly sensitive, totally unconsented recordings of individuals in intimate settings. Meta’s highly touted automated systems intended to blur faces and sensitive financial documents reportedly failed at alarming rates.

The incident prompted immediate inquiries from 35 Members of the European Parliament, who cited violations of GDPR consent and transparency requirements for international data transfers. The resulting public relations fallout generated multimillion-dollar class-action lawsuits and severely damaged European consumer trust in Meta’s ambient computing ambitions.

21. Biometric Privacy Laws

In the United States, an entirely different frontier of privacy law is rapidly evolving in direct response to the powerful capabilities of the Meta Neural Band. Because the band utilizes advanced surface electromyography (EMG) to detect the electrical activity of the peripheral nervous system, it harvests physiological data that is fundamentally more intimate than standard behavioral telemetry. Neural data can potentially reveal deeply inherent characteristics of identity, cognitive state, mental well-being, and hidden physical disease markers.

In response to these advancements in brain-computer interfaces, state legislatures have begun preemptively categorizing neural signals as protected sensitive information. In 2024, Colorado enacted the first neural data protection legislation, followed closely by California, which amended the California Consumer Privacy Act (CCPA) to specifically include data derived from the peripheral nervous system as sensitive information. Similar questions are being examined under the Illinois Biometric Information Privacy Act (BIPA), which carries strict, punitive liabilities.

Meta’s official corporate privacy policy explicitly states that the EMG signals are used strictly to translate intended gestures into digital commands and nothing else. However, legal experts and privacy advocates remain highly skeptical, arguing that aggregated neuromuscular data could eventually be reverse-engineered by advanced AI to deduce emotional states or subconscious reactions to environmental stimuli, opening the door for unprecedentedly invasive targeted advertising profiles that manipulate human behavior.

22. Social Reception and Privacy

At the consumer level, the perennial challenge of broad social acceptability remains acute and contentious. While the integration of the technology into classic Ray-Ban frames solved the aesthetic issue, the discreet, almost invisible nature of the hardware exacerbates privacy fears among the general public.

The Gen 2 and Display models feature a prominent, blinking LED on the frame explicitly designed to clearly alert bystanders that continuous video recording is currently in progress. However, extensive field tests and highly critical media reviews consistently indicate that the LED is easily missed, completely ignored, or entirely washed out, especially in bright daylight. Furthermore, malicious users have rapidly disseminated massive online tutorials detailing exactly how to physically obscure or electronically disable the recording light using simple electrical tape or highly complex aftermarket modifications, bypassing the primary safety feature entirely.

The Electronic Frontier Foundation (EFF) has issued stark warnings about the societal normalization of face-mounted dashcams. The EFF highlights that bystanders cannot opt out of being indexed by Meta’s multimodal algorithms, because footage is automatically imported to the Meta AI mobile app, where it is subjected to cloud-based AI processing that feeds into Meta’s global data infrastructure without their consent.

23. Privacy and Facial Recognition Pushback

Meta is currently facing coordinated pushback from major privacy advocates regarding the future capabilities of its optical sensors. Recently, over 70 civil society organizations, including the ACLU and the Electronic Frontier Foundation, issued a formal letter urging Meta to permanently halt the development of facial recognition features for its smart glasses. They specifically called out a rumored and controversial Name Tag feature that would theoretically allow wearers to automatically identify strangers they look at by matching their faces to public social media profiles in real time, effectively destroying the concept of public anonymity.